Derek Sivers’ post, “Offline 23 hours a day”, attracted a lot of attention the other week. I was particularly impressed by Rishi Dass’s rebuttal:

> …
>
> Anyway, the reasoning behind this idea of disconnecting seems to be that they equate being productive with having no internet or phone service. This implies that the tool (internet or the phone) is the problem. But is that entirely true?
>
> They further argue that disconnection helps them create a vacuum through media silence, allowing their thoughts to expand and fill the space. While it’s understandable that you can concentrate better when your attention is focused on one thing, there’s no reason you can’t stay online and do the work. If you’re able to work comfortably in a library, you can do this.
>
> …

Obviously, Derek’s approach is valid. It sounds like he’s found what works for him in terms of managing his time, life, mental energy, and the like, and that’s great! I’d be lying if I said that I didn’t envy him at least a little: don’t we all enjoy “unplugging” sometimes?

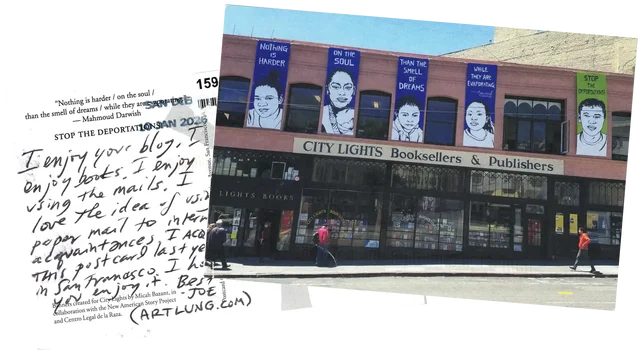

I think Derek’s post is so appealing because it taps into our nostalgia for a simpler, less always-online time.

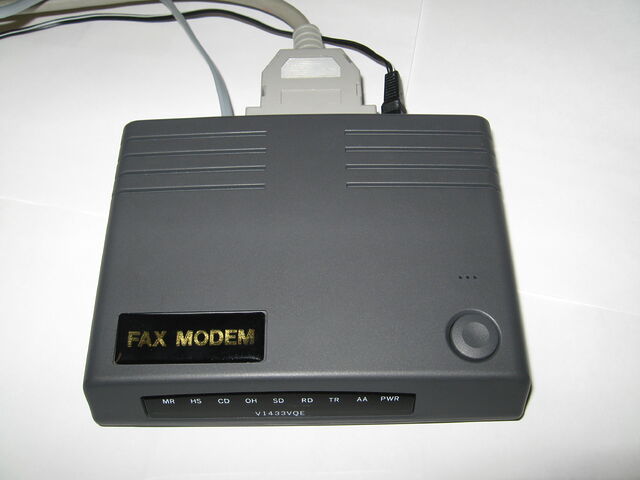

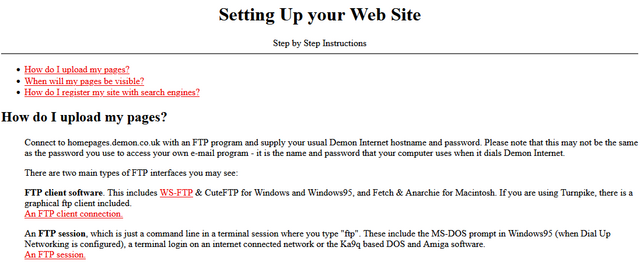

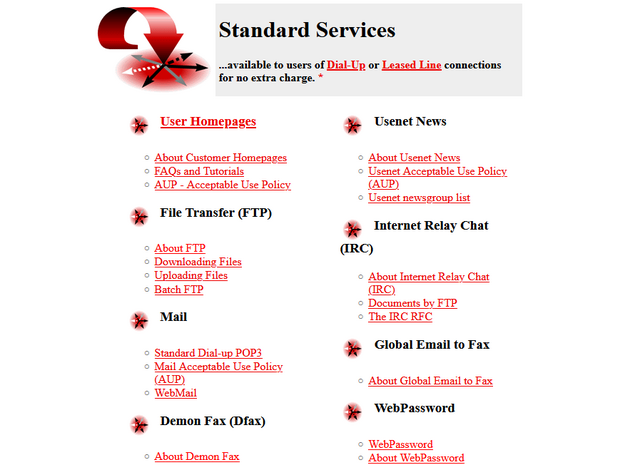

For a while I thought that this was a sensation unique to folks who, like me, had their first experiences of the Internet in a very intermittent and deliberate way. In the 1990s, I used to go on the Internet: a premeditated act that meant being somewhere with a landline and the appropriate hardware, making sure that nobody was using (or intending to use) the phone, booting up a computer, dialling up the local Internet Service Provider, and only then going about what I wanted to do. At that time, it was uncommon to use the ’net for trivial things like checking the weather or what’s on at the cinema, because picking up the local newspaper would probably have been faster! Similarly, it wasn’t especially useful for procrastination, because picking up a book or going for a walk was more accessible and more reliable.

But this isn’t a generational thing, or at least not entirely. Gen Zs are seeing the joy in retro tech from before they were born, which is something I’ve witnessed myself: I’m part of a couple of online communities that do quite a bit of retro-Web and other retro-tech stuff, and I’ve been amazed at how young the demographics can skew in some of these groups! Like: there are people who were born after Facebook was founded who yearn to recreate the kind of dial-up experience that I had before their parents had even met.

(Obviously, I think this is great; I think there are great lessons to be learned from the more open, decentralised, distributed, transparent, and exploratory Internet of times gone by. It just… initially surprised me to find so many younger folks showing such an interest in it, too.)

I still think this is nostalgia, though. Here’s why: none of us are born with unfettered and unfiltered access to the Internet. Unless they have the most hands-off parents possible, even a child born today won’t be “always online” for the first decade or more of their life. And being a child, for most folks, is a time of safety and wonderment: where there are other people to attend to our needs and filter our information intake and answer our questions in a protected environment. Growing up, we all have to learn to do those things for ourselves. And in the information-saturated attention economy of the modern world, that shit is exhausting.

You don’t need to be reminiscing about dial-up to fantasise about a slower time, when pub quizzes couldn’t be spoiled by a shithead in the corner quietly looking up the answers (undetectable unless you caught them in the act), and when your pocket computer wouldn’t beep for attention every 30 seconds because a half-remembered friend posted a holiday snap. Not having that extra cognitive load all the time is liberating!

No wonder “going offline” seems like a luxury to people, and why Derek’s extreme approach is so intriguing! But it’s just like that curated holiday snap that your friend-of-a-friend just posted to Instagram: it’s a snapshot of the best bits of somebody else’s life. It’s not reality. It’s your imagination, your fantasy, projected onto somebody else’s solution. “This works for them,” you say to yourself, “It must work for me, too!”

Maybe it would! And I hope that a few people feel empowered by Derek’s post to fulfil their dream and go live in the woods. Good for them!

But Rishi’s rebuttal brings us a sense of balance. For most people, it’s not necessary to go live in the woods to “go offline”. If you really want to, just… go offline. The power’s in your hands.

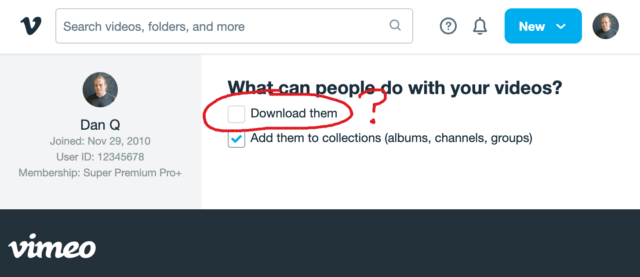

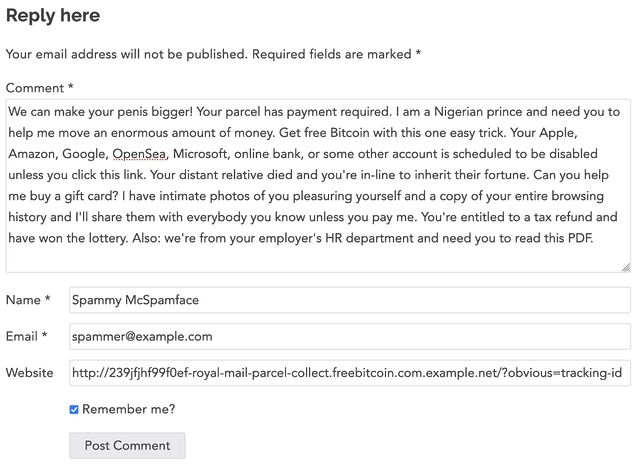

- if you don’t want to be distracted by social media and games, close those accounts and take those apps off your device

- if you don’t want to be interrupted by notifications, switch them off and check your inputs on your own schedule

- if you don’t want to be online at all, turn on airplane mode or disconnect from the WiFi, and narrow your focus onto that book, board game, film, conversation, or daydream

- if you don’t trust yourself not to be tempted to backslide… well, that’s a bigger problem of self-control that you need to work on, but in the meantime, try an experiment: leave your device behind and take a walk!

I get wanting to disconnect. I have my own controls in place, too, and they’re great for my mental health. But my approach, Derek’s approach, anybody’s approach… don’t have to be your approach.

Start the journey by working out what parts of the always-online world aren’t serving you. What things are more of a psychological drain than a boost? What’s bad for your mental wellbeing on the whole (not just in the moment)? What habits would you like to kick? What excuses are you using to keep them?

Then, work out what you can do about them. Seek assistance if you need it; you might not have all the solutions. But beware the seductive approach of taking what works for somebody else and trying to fit yourself to their mould.

Sure: maybe you need to go live in the woods with Derek. But make that choice because it solves your problems, not because it solves his!

![Stylish (for circa 2000) webpage for HoTMetaL Pro 6.0, advertising its 'unrivaled [sic] editing, site management and publishing tools'.](https://bcdn.danq.me/_q23u/2025/08/hotmetal-pro-6-640x396.jpg)