Man, I have missed having a battlestation to work at these last few months. It’s nice to sit at one again, even if it’s only a ‘chicory battlestation’.

Tag: computers

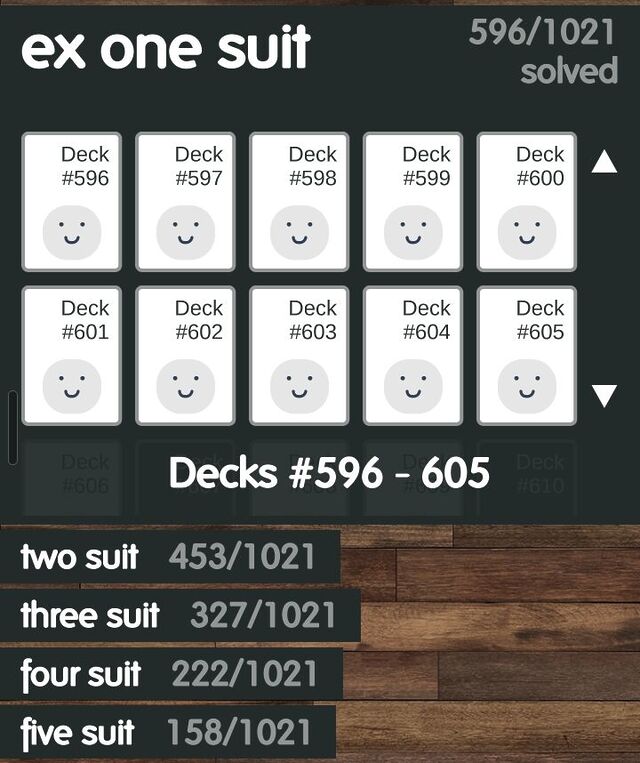

FlipFlop Solitaire’s Deck-Generation Secret

For the last few years, my go-to mobile game1 has been FlipFlop Solitaire.

The game

The premise is simple enough:

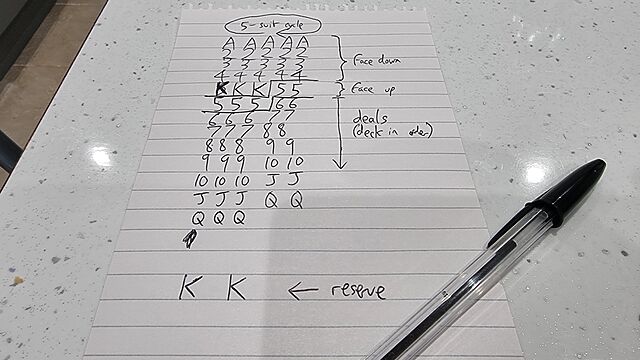

- 5-column solitaire game with 1-5 suits.

- 23 cards dealt out into those columns; only the topmost ones face-up.

- 2 “reserve” cards retained at the bottom.

- Stacks can be formed atop any suit based on value-adjacency (in either order, even mixing the ordering within a stack)

- Individual cards can always be moved, but stacks can only be moved if they share a value-adjacency chain and are all the same suit.

- Aim is to get each suit stacked in order at the top.
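Here's a rough sketch of the two stacking rules above, as I read them. It isn't code from the game: the `(rank, suit)` tuple representation, the function names, the lack of King-to-Ace wrap-around, and the idea that any card may be placed in an empty column are all assumptions made for illustration.

```python
from typing import List, Tuple

Card = Tuple[int, str]  # (rank 1-13, suit letter); an assumed representation


def adjacent(a: Card, b: Card) -> bool:
    """True if two cards are neighbours in value (no wrap-around assumed)."""
    return abs(a[0] - b[0]) == 1


def can_place(card: Card, column: List[Card]) -> bool:
    """A single card may go on an empty column (assumed) or on a card of
    any suit that is one higher or one lower in value."""
    return not column or adjacent(card, column[-1])


def is_movable_stack(stack: List[Card]) -> bool:
    """A stack moves as a unit only if it is one suit throughout and every
    neighbouring pair of cards is value-adjacent."""
    same_suit = all(c[1] == stack[0][1] for c in stack)
    chained = all(adjacent(x, y) for x, y in zip(stack, stack[1:]))
    return bool(stack) and same_suit and chained
```

So a 7 of hearts can sit on an 8 of spades, but a hearts-and-spades run can't then be picked up as a single stack.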

One of the things that stands out to me is that the game comes with over five thousand pre-shuffled decks to play, all of which guarantee that they are “winnable”.

Playing through these is very satisfying because it means that if you get stuck, you know that it’s because of a choice that you made2, and not (just) because you got unlucky with the deal.

Every deck is “winnable”?

When I first heard that every one of FlipFlop’s pregenerated decks was winnable, I misinterpreted it as claiming that every conceivable shuffle for a game of FlipFlop was winnable. But that’s clearly not the case, and it doesn’t take significant effort to come up with a deal that’s clearly not-winnable. It only takes a single example to disprove a statement!

That it’s possible for a fairly-shuffled deck of cards to lead to an “unwinnable” game of FlipFlop Solitaire means the author must have necessarily had some mechanism to differentiate between “winnable” (which are probably the majority) and “unwinnable” ones. And therein lies an interesting problem.

If the only way to conclusively prove that a particular deal is “winnable” is to win it, then the developer must have had an algorithm that they were using to test that a given deal was “winnable”: that is – a brute-force solver.

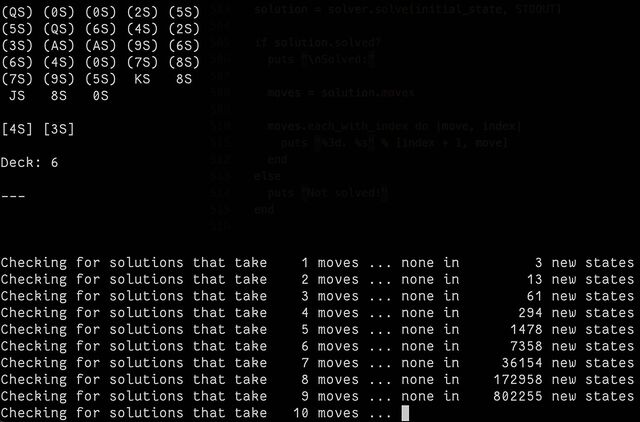

So I had a go at making one3. The code is pretty hacky (don’t judge me) and, well… it takes a long, long time.

Partially that’s because the underlying state engine I used, BfsBruteForce, is a breadth-first optimising algorithm. It aims to find the absolute fewest-moves solution, which isn’t necessarily the fastest one to find because it means that it has to try all of the “probably stupid” moves it finds4 with the same priority as the “probably smart” moves5.
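To show why breadth-first search is thorough but slow, here's a minimal generic sketch. It is not the BfsBruteForce API (which isn't shown here): the function name and its `is_goal` and `next_states` callbacks are invented for this example, and the "game" is a toy number puzzle rather than solitaire.

```python
from collections import deque


def bfs_fewest_moves(start, is_goal, next_states):
    """Return the shortest list of states from start to a goal, or None.

    Every state reachable in n moves is examined before any state that
    needs n + 1 moves, so "stupid" and "smart" moves get equal priority.
    """
    queue = deque([start])
    came_from = {start: None}  # doubles as the "already seen" set
    while queue:
        state = queue.popleft()
        if is_goal(state):
            path = []
            while state is not None:
                path.append(state)
                state = came_from[state]
            return path[::-1]
        for nxt in next_states(state):
            if nxt not in came_from:
                came_from[nxt] = state
                queue.append(nxt)
    return None


# Toy puzzle: get from 1 to 10 using only "+1" and "double".
path = bfs_fewest_moves(
    1,
    lambda n: n == 10,
    lambda n: [m for m in (n + 1, n * 2) if m <= 20],
)
print(path)
```

The first solution found is guaranteed to use the fewest moves, but only because everything shallower has already been tried. That's fine for a toy; for a card game with dozens of legal moves per position the queue balloons very quickly.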

I played about with enhancing it with some heuristics, and scoring different play states, and then running that in a pruned depth-first way, but it didn’t really help much. It came to an answer eventually, sure… but it took a long, long time, and it’s pretty easy to see why: the number of permutations for a shuffled deck of cards is greater than the number of atoms on Earth!

If you pull off a genuinely random shuffle, then – statistically-speaking – you’ve probably managed to put that deck into an order that no deck of cards has ever been in before!6
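If you'd like to check the atoms claim yourself, the arithmetic is short. The figure for atoms on Earth is a commonly-quoted ballpark estimate rather than anything precise, so treat it as an assumption.

```python
import math

# Number of distinct orderings of a standard 52-card deck.
orderings = math.factorial(52)

# Rough, commonly-quoted estimate of the number of atoms in the Earth.
# This is an assumption for illustration, not a measured constant.
ATOMS_ON_EARTH = 1.33e50

print(f"52! is about {orderings:.3e}")
print(f"That is about {orderings / ATOMS_ON_EARTH:.1e} orderings per atom")
```

52! comes out at roughly 8 × 10^67, which dwarfs the estimate by many orders of magnitude.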

And sure: the rules of the game reduce the number of possibilities quite considerably… but there’s still a lot of them.

So how are “guaranteed winnable” decks generated?

I think I’ve worked out the answer to this question: it came to me in a dream!

The trick to generating “guaranteed winnable” decks for FlipFlop Solitaire (and, probably, any similar game) is to work backwards.

Instead of starting with a random deck and checking if it’s solvable by performing every permutation of valid moves… start with a “solved” deck (with all the cards stacked up neatly) and perform a randomly-selected series of valid reverse-moves! E.g.:

- The first move is obvious: take one of the kings off the “finished” piles and put it into a column.

- For the next move, you’ll either take a different king and do the same thing, or take the queen that was exposed from under the first king and place it either in an empty column or atop the first king (optionally, but probably not, flipping the king face down).

- With each subsequent move, you determine what the valid next-reverse-moves are, choose one at random (possibly with some kind of weighting), and move on!
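The reverse-move idea above can be sketched on a heavily simplified toy game. To be clear about the assumptions: this is not FlipFlop's generator and not its full rules. It uses one suit, a single finished pile, no face-down cards and no reserve; the function names and move tuples are invented for the example; and the random choice is uniform, where a real generator would probably weight it.

```python
import random


def reverse_moves(foundation, columns):
    """Enumerate legal reverse-moves for the toy one-suit game."""
    moves = []
    if foundation > 0:
        # Undo "play a card to the finished pile": lift the top card
        # back off the pile and drop it onto any column.
        moves += [("unplay", None, dst) for dst in range(len(columns))]
    for src, col in enumerate(columns):
        # Undo "move a card onto column src": only allowed if that forward
        # move would have been legal (card alone in its column, or
        # value-adjacent to the card beneath it).
        if col and (len(col) == 1 or abs(col[-1] - col[-2]) == 1):
            moves += [("unmove", src, dst)
                      for dst in range(len(columns)) if dst != src]
    return moves


def generate_deal(ranks=13, n_columns=5, extra_moves=40, seed=None):
    """Walk backwards from the solved state; return (columns, solution)."""
    rng = random.Random(seed)
    foundation = ranks                      # solved: every card on the pile
    columns = [[] for _ in range(n_columns)]
    solution = []                           # forward moves, newest first
    steps = 0
    while foundation > 0 or steps < extra_moves:
        moves = reverse_moves(foundation, columns)
        if not moves:
            break                           # can only happen once the pile is empty
        kind, src, dst = rng.choice(moves)  # uniform; weighting would go here
        if kind == "unplay":
            columns[dst].append(foundation)
            foundation -= 1
            solution.append(("play", dst, None))
        else:
            columns[dst].append(columns[src].pop())
            solution.append(("move", dst, src))
        steps += 1
    solution.reverse()
    return columns, solution


def replay(columns, solution, ranks=13):
    """Play the recorded solution forwards, checking every move is legal."""
    cols = [list(c) for c in columns]
    foundation = 0
    for kind, src, dst in solution:
        if not cols[src]:
            return False
        card = cols[src].pop()
        if kind == "play":
            if card != foundation + 1:
                return False
            foundation += 1
        else:
            if cols[dst] and abs(cols[dst][-1] - card) != 1:
                return False
            cols[dst].append(card)
    return foundation == ranks and not any(cols)
```

Because every reverse-move is the exact undo of a legal forward move, the recorded list played in the opposite order is a winning line by construction. Calling `replay(*generate_deal(seed=1))` is just a sanity check on that, not a search.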

In computational complexity terms, you’ve just swapped what is probably an NP-Hard problem7 for one that can be solved in polynomial time.

Once you eliminate repeat states and weight the randomiser to gently favour moving “towards” a solution that leaves the cards set-up and ready to begin the game, you’ve created a problem that may take an indeterminate amount of time… but it’ll be finite and its cost will scale roughly linearly with the number of reverse-moves you make. And that’s a big improvement.

I started implementing a puzzle-creator that works in this manner, but the task wasn’t as interesting as the near-impossible brute-force solver so I gave up, got distracted, and wrote some even more-pointless code instead.

If you go ahead and make an open source FlipFlop deck generator, let me know: I’d be interested to play with it!

Footnotes

1 I don’t get much time to play videogames, nowadays, but I sometimes find that I’ve got time for a round or two of a simple “droppable” puzzle game while I’m waiting for a child to come out of school or similar. FlipFlop Solitaire is one of only three games I have installed on my phone for this purpose, the other two – both much less frequently-played – being Battle of Polytopia and the buggy-but-enjoyable digital version of Twilight Struggle.

2 Okay, it feels slightly frustrating when you make a series of choices that are perfectly logical and the most-rational decision under the circumstances. But the game has an “undo” button, so it’s not that bad.

3 Mostly I was interested in whether such a thing was possible, but folks who’re familiar with how much I enjoy “cheating” at puzzles (don’t get me started on Jigidi jigsaws…) by writing software to do them for me will also realise that this is Just What I Am Like.

4 An example of a “probably stupid” move would be splitting a same-suit stack in order to sit it atop a card of a different suit, when this doesn’t immediately expose any new moves. Sometimes – just sometimes – this is an optimal strategy, but normally it’s a pretty bad idea.

5 Moving a card that can go into the completed stacks at the top is usually a good idea… although just sometimes, and especially in complex mid-game multi-suit scenarios, it can be beneficial to keep a card in play so that you can use it as an anchor for something else, thereby unblocking more flexible play down the line.

6 Fun fact: shuffling a deck of cards is a sufficient source of entropy that you can use it to generate cryptographic keystreams, as Bruce Schneier demonstrated in 1999.

7 I’ve not thought deeply about it, but determining if a given deck of cards will result in a winnable game probably lies somewhere between the travelling salesman and the halting problem, in terms of complexity, right? And probably not something a right-thinking person would ask their desktop computer to do for fun!

Chicory House, Real Coffee, Flooded Keyboard

Chicory, Coffee, and Code

Now that we’ve finished our move into the Chicory House, I have for the first time in over two months been able to set up my preferred coding environment… with a proper monitor on a proper desk with a proper office chair. Bliss!

A Random List of Silly Things I Hate

So apparently now this is a thing, so here I go:

- Websites that are just blank pages if the JavaScript doesn’t load from the CDN.1

- The misunderstanding that LLMs can somehow be a route to AGI.

- Computer systems that say my name is too short or my password is too long.2

- People being unwilling to discuss their wild claims, and then later using the lack of discussion as evidence of widespread acceptance.

- When people balance the new toilet roll one atop the old one’s tube.3

- Shellfish. Why would you eat that!?

- People assuming my interest in computers and technology means I want to talk to them about cryptocurrencies.4

- Websites that nag you to install their shitty app. (I know you have an app. I’m choosing to use your website. Stop with the banners!)

- People who seem to only be able to drive at one speed.5

- The assumption that the fact I’m “sharing” my partner is some kind of compromise on my part; a concession; something that I’d “wish away” if I could. (It’s very much not.)

- Brexit.

Wow, that was strangely cathartic.

Footnotes

1 I have a special pet hate for websites that require JavaScript to render their images.

Like… we’ve had the <img> tag since 1993! Why are you throwing it away and replacing it with something objectively slower, more-brittle, and less-accessible?

2 Or, worse yet, claiming that my long, random password is insecure because it contains my surname. I get that composition-based password rules, while terrible (even when they’re correctly implemented, which they’re often not), are a moderately useful model for people to whom you’d otherwise struggle to explain password complexity. I get that a password composed entirely of personal information about the owner is a bad idea too. But there’s a correct way to do this, and it’s not “ban passwords with forbidden words in them”. Here’s what you should do: first, strip any forbidden words from the password: you might need to make multiple passes. Second, validate the resulting password against your composition rules. If it fails, then yes: the password isn’t good enough. If it passes, then it doesn’t matter that forbidden words were in it: a properly-stored and used password is never made less-secure by the addition of extra information into it!

3 This is the worst of the toilet paper crimes, but there’s a lesser but more-common offence.

4 Also: I’m uninterested in whatever multiplayer shooter game you’re playing, and no I won’t fix your printer.

5 “You were doing 35mph in the 60mph limit, then you were doing 35mph in the 40mph limit, now you’re doing 35mph in the 20mph limit. Argh!”
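For the strip-then-validate approach described in footnote 2, a minimal sketch might look like the following. The composition rule used here (at least 12 characters and at least three of the four character classes) is a made-up stand-in for whatever rules a real system applies, and the function names are hypothetical.

```python
import re


def strip_forbidden(password, forbidden_words):
    """Remove forbidden words (case-insensitively), repeating until none
    remain, since removing one word can splice another together."""
    words = [re.escape(w) for w in forbidden_words if w]
    if not words:
        return password
    pattern = re.compile("|".join(words), re.IGNORECASE)
    previous = None
    while previous != password:
        previous = password
        password = pattern.sub("", password)
    return password


def meets_composition_rules(password, min_length=12):
    """Hypothetical rule: long enough, and 3 of 4 character classes."""
    classes = [
        any(c.islower() for c in password),
        any(c.isupper() for c in password),
        any(c.isdigit() for c in password),
        any(not c.isalnum() for c in password),
    ]
    return len(password) >= min_length and sum(classes) >= 3


def is_acceptable(password, forbidden_words):
    """Validate what is left after the forbidden words are taken out."""
    return meets_composition_rules(strip_forbidden(password, forbidden_words))
```

With this, a long random password that happens to contain a surname still passes, because what remains after stripping is strong enough on its own. A password like `Smith1234!` fails, because almost nothing is left once the surname is removed.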

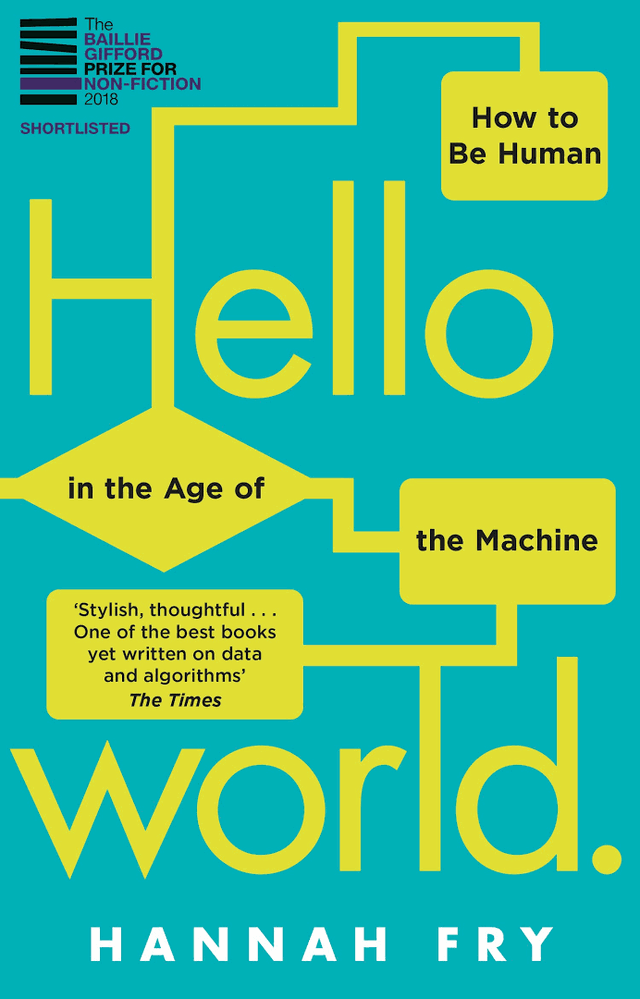

Hello World by Hannah Fry

I’m not certain, but I think that I won my copy of Hello World: How to Be Human in the Age of the Machine at an Oxford Geek Nights event, after I was first and fastest to correctly identify a photograph of Stanislav Petrov shown by the speaker.

Despite being written a few years before the popularisation of GenAI, the book’s remarkably prescient on the kinds of big data and opaque decision-making issues that are now hitting the popular press. I suppose one might argue that these issues were always significant. (And by that point, one might observe that GenAI isn’t living up to its promises…)

Fry spins an engaging and well-articulated series of themed topics. If you didn’t already have a healthy concern about public money spending and policy planning being powered by the output of proprietary algorithms, you’ll certainly finish the book that way.

One of my favourite of Fry’s (many) excellent observations is buried in a footnote in the conclusion, where she describes what she calls the “magic test”:

There’s a trick you can use to spot the junk algorithms. I like to call it the Magic Test. Whenever you see a story about an algorithm, see if you can swap out any of the buzzwords, like ‘machine learning’, ‘artificial intelligence’ and ‘neural network’, and swap in the word ‘magic’. Does everything still make grammatical sense? Is any of the meaning lost? If not, I’d be worried that it’s all nonsense. Because I’m afraid – long into the foreseeable future – we’re not going to ‘solve world hunger with magic’ or ‘use magic to write the perfect screenplay’ any more than we are with AI.

That’s a fantastic approach to spotting bullshit technical claims, and I’m totally going to be using it.

Anyway: this was a wonderful read and I only regret that it took me a few years to get around to it! But fortunately, it’s as relevant today as it was the day it was released.

Bee

As part of my efforts to reclaim the living room from the children, I’m building a new gaming PC for the playroom. She’s called Bee, and – thanks to the absolute insanity that is The Tower 300 case from Thermaltake – she’s in one of the most bonkers PC cases I’ve ever worked in.

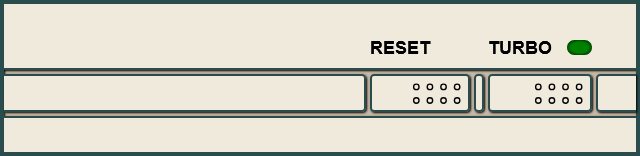

Beige Buttons

Back before PCs were black, they were beige. And even further back, they’d have not only “Reset” and “Power” buttons, but also a “Turbo” button.

I’m not here to tell you what it did1. No, I’m here to show you how to re-live those glory days with a Turbo button of your very own, implemented as a reusable Web Component that you can install on your very own website:

(Don’t press the Reset button; other people are using this website!)

If you’d like some beige buttons of your own, you can get them at Beige-Buttons.DanQ.dev. Two lines of code and you can pop them on any website you like. Also, it’s open-source under the Unlicense so you can take it, break it, or do what you like with it.

I’ve been slumming it in some Web Revivalist circles lately, and it might show. Best Resolution (with all its 88×31s2), which I launched last month, for example.

You might anticipate seeing more retro fun-and-weird going on here. You might be right.

Now… be honest – how many of you pressed the “reset” button even though you were told not to?

Footnotes

1 If you know, you know. And if you don’t, then take a look at the website for my new web component, which has an “about” section.

2 I guess that’s another “if you know, you know”, but at least you’ll get fewer conflicting answers if you search for an explanation than you will if you try to understand the turbo button.

Dan Has Too Many Monitors

My new employer sent me a laptop and a monitor, which I immediately added to my already pretty-heavily-loaded desk. Wanna see?

DB13W3

I’d like to nominate DB13W3 for Most Cursed Connector. I mean, just look at that thing!

Bonus: there were at least two different, incompatible, pinout “standards” for this thing, so there was no guarantee that a random monitor with this cable would connect to your workstation, even if it had the right port.

3Camp 2025

I’m off for a week of full-time volunteering with Three Rings at 3Camp, our annual volunteer hack week: bringing together our distributed team for some intensive in-person time, working to make life better for charities around the world.

And if there’s one good thing to come out of me being suddenly and unexpectedly laid-off two days ago, it’s that I’ve got a shiny new laptop to do my voluntary work on (Automattic have said that I can keep it).

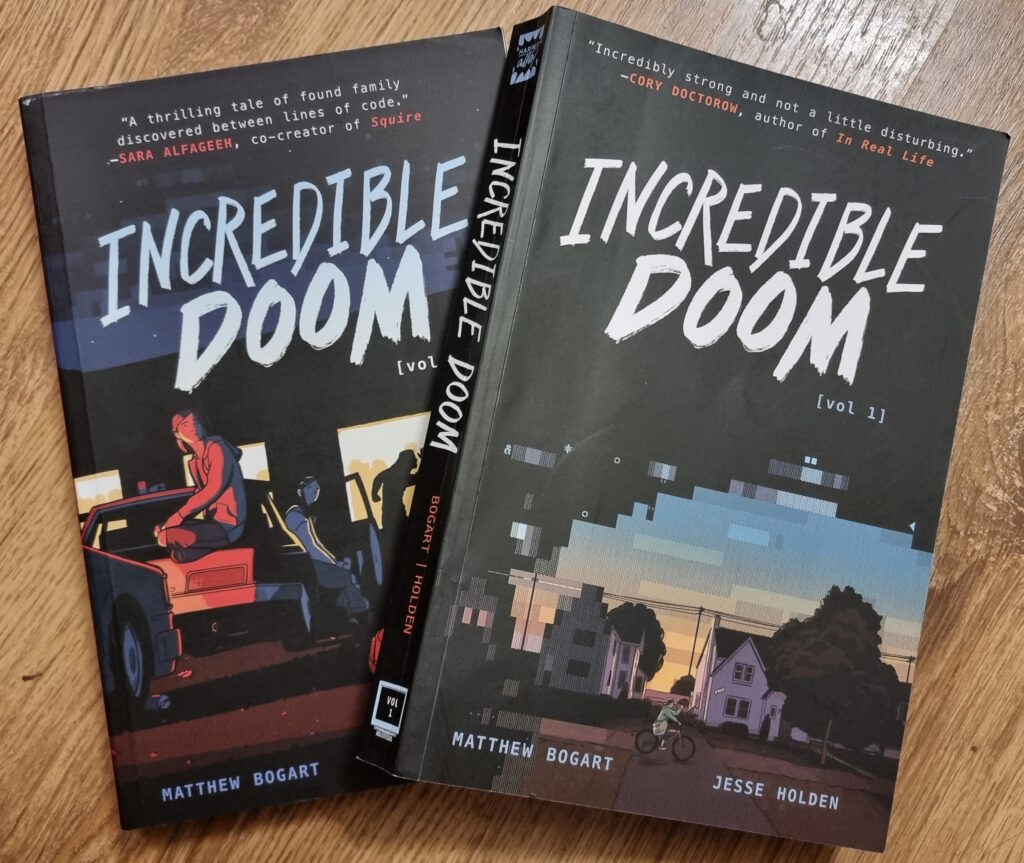

Incredible Doom

I just finished reading Incredible Doom volumes 1 and 2, by Matthew Bogart and Jesse Holden, and man… that was a heartwarming and nostalgic tale!

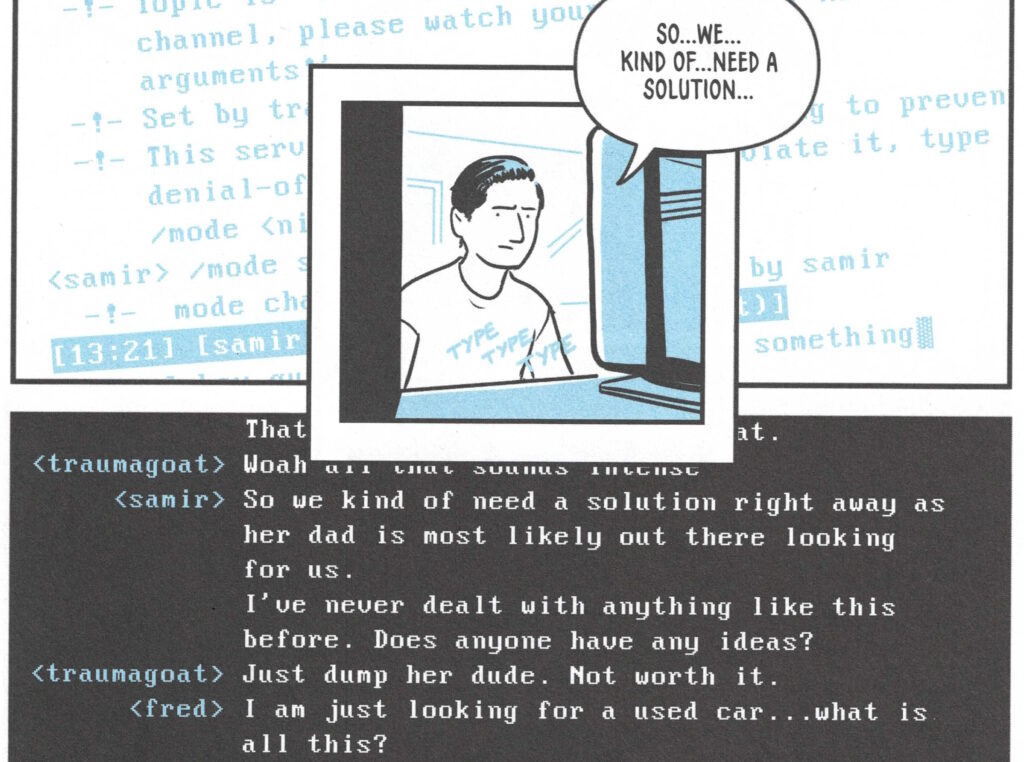

Set in the early-to-mid-1990s world in which the BBS is still alive and kicking, and the Internet’s gaining traction but still lacks the “killer app” that will someday be the Web (which is still new and not widely-available), the story follows a handful of teenagers trying to find their place in the world. Meeting one another in the 90s explosion of cyberspace, they find online communities that provide connections that they’re unable to make out in meatspace.

It touches on experiences of 90s cyberspace that, for many of us, were very definitely real. And while my online “scene” at around the time that the story is set might have been different from that of the protagonists, there’s enough of an overlap that it felt startlingly real and believable. The online world in which I – like the characters in the story – hung out… but which occupied a strange limbo-space: both anonymous and separate from the real world but also interpersonal and authentic; a frontier in which we were still working out the rules but within which we still found common bonds and ideals.

Anyway, this is all a long-winded way of saying that Incredible Doom is a lot of fun and if it sounds like your cup of tea, you should read it.

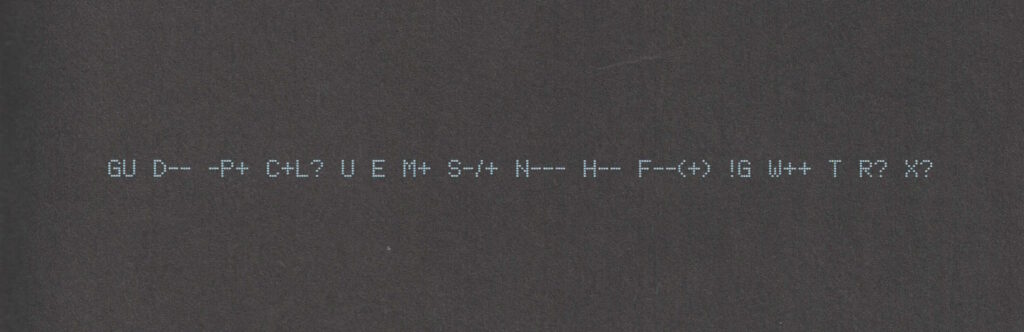

Also: shortly after putting the second volume down, I ended up updating my Geek Code for the first time in… ooh, well over a decade. The standards have moved on a little (not entirely in a good way, I feel; also they’ve diverged somewhat), but here’s my attempt:

----- BEGIN GEEK CODE VERSION 6.0 ----- GCS^$/SS^/FS^>AT A++ B+:+:_:+:_ C-(--) D:+ CM+++ MW+++>++ ULD++ MC+ LRu+>++/js+/php+/sql+/bash/go/j/P/py-/!vb PGP++ G:Dan-Q E H+ PS++ PE++ TBG/FF+/RM+ RPG++ BK+>++ K!D/X+ R@ he/him! ----- END GEEK CODE VERSION 6.0 -----

Footnotes

1 I was amazed to discover that I could still remember most of my Geek Code syntax and only had to look up a few components to refresh my memory.

My First MP3

Podcast Version

This post is also available as a podcast. Listen here, download for later, or subscribe wherever you consume podcasts.

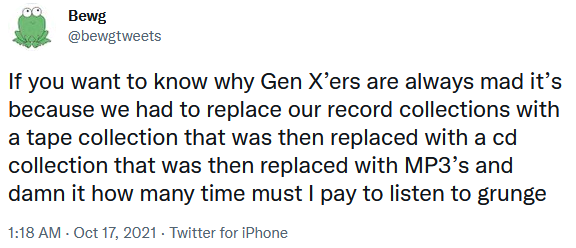

Somebody shared with me a tweet about the tragedy of being a Gen X’er and having to buy all your music again and again as formats evolve. Somebody else shared with me Kyla La Grange’s cover of a particular song. Together… these reminded me that I’ve never told you the story of my first MP3…1

In the Summer of 1995 I bought the CD single of the (still excellent!) Set You Free by N-Trance.2 I’d heard about this new-fangled “MP3” audio format, so soon afterwards I decided to rip a copy of the song to my PC.

I was using a 66MHz 486SX CPU, and without an embedded FPU I didn’t quite have the spare processing power to rip-and-encode in a single pass.3 So instead I first ripped to an uncompressed PCM .wav file and then performed the encoding: the former step was done almost in real-time (I listened to the track as it ripped!), about 7 minutes. The latter step took about 20 minutes.

So… about half an hour in total, to rip a single song.

Creating what would now be considered an appalling 32kHz mono-channel file meant that I briefly stored both a 27MB wave file and the final ~4MB MP3 file. 31MB might not sound huge, but I only had a total of 145MB of hard drive space at the time, so 31MB consumed over a fifth of my entire fixed storage! Even after deleting the intermediary wave file I was left with a single song consuming around 3% of my space, which is mind-boggling to think about in hindsight.
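Those numbers hang together if you run the sums. The one thing assumed here that isn't stated above is the sample size: 16 bits (2 bytes) per sample, which was typical for PCM rips. The duration is the "about 7 minutes" from the rip time.

```python
SAMPLE_RATE_HZ = 32_000
CHANNELS = 1
BYTES_PER_SAMPLE = 2      # assumption: 16-bit PCM
DURATION_S = 7 * 60       # "about 7 minutes"
DISK_MB = 145
MP3_MB = 4                # "~4MB"

# Uncompressed size is simply samples per second x bytes x seconds.
wav_mb = SAMPLE_RATE_HZ * CHANNELS * BYTES_PER_SAMPLE * DURATION_S / 1_000_000

peak_share = (wav_mb + MP3_MB) / DISK_MB   # both files on disk at once
final_share = MP3_MB / DISK_MB             # after deleting the .wav
mp3_kbps = MP3_MB * 8_000 / DURATION_S     # implied average MP3 bitrate

print(f"WAV: {wav_mb:.1f}MB, peak use: {peak_share:.0%}, "
      f"afterwards: {final_share:.0%}, bitrate: about {mp3_kbps:.0f}kbit/s")
```

That gives a wave file just under 27MB, a little over a fifth of the disk in use at the peak, and about 3% afterwards. The implied bitrate is only a rough figure, since both the 4MB and the 7 minutes are approximate.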

But it felt like magic. I called my friend Gary to tell him about it. “This is going to be massive!” I said. At the time, I meant for techy people: I could imagine a future in which, with more hard drive space, I’d keep all my music this way… or else bundle entire artists onto writable CDs in this new format, making albums obsolete. I never considered that over the coming decade or so the format would enter the public consciousness, let alone that it’d take off like it did.

The MP3 file I produced had a fault. Most of the way through the encoding process, I got bored and ran another program, and this must’ve interfered with the stream because there was an audible “blip” noise about 30 seconds from the end of the track. You’d have to be listening carefully to hear it, or else know what you were looking for, but it was there. I didn’t want to go through the whole process again, so I left it.

But that artefact uniquely identified that copy of what was, in the end, a popular song to have in your digital music collection. As the years went by and I traded MP3 files in bulk at LAN parties or on CD-Rs or, on at least one occasion, on an Iomega Zip disk (remember those?), I’d occasionally see N-Trance - (Only Love Can) Set You Free.mp34 being passed around and play it, to see if it was “my” copy.

Sometimes the ID3 tags had been changed because, for example, the previous owner had decided it deserved to be considered Genre: Dance instead of Genre: Trance5. But I could still identify that file because of the audio fingerprint, distinct to the first MP3 I ever created.

I still had that file when I went to university (where it occupied a smaller proportion of my hard drive space) and hearing that distinctive “blip” would remind me about the ordeal that was involved in its creation. I don’t have it any more, but perhaps somebody else still does.

Footnotes

1 I might never have told this story on my blog, but eagle-eyed readers may remember that I’ve certainly hinted at it before now.

2 Rewatching that music video, I’m struck by a recollection of how crazy popular crossfades were on 1990s dance music videos. More than just a transition, I’m pretty sure that most of the frames of that video are mid-crossfade: it feels like I’m watching Kelly Llorenna hanging out of a sunroof but I accidentally left one of my eyeballs in a smoky nightclub and can still see out of it as well.

3 I initially tried to convert directly from Red Book format to an MP3 file, but the encoding process was too slow and the CD drive’s buffer filled up and didn’t get drained by the processor, which was still presumably bogged down with framing or Fourier-transforming earlier parts of the track. The CD drive reasonably assumed that it wasn’t actually being used and spun-down the drive motor, and this caused it to lose its place in the track, killing the whole process and leaving me with about a 40-second recording.

4 Yes, that filename isn’t quite the correct title. I was wrong.

5 No, it’s clearly trance. They were wrong.

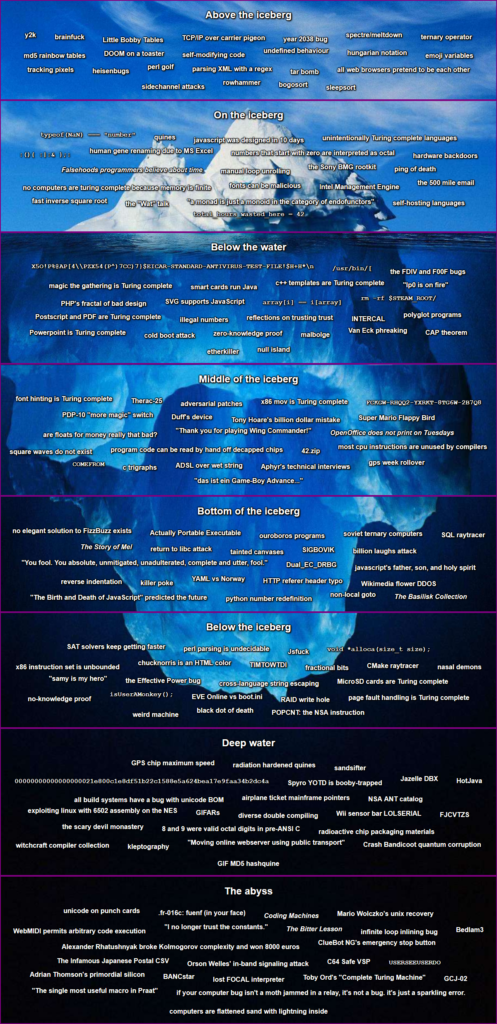

The Cursed Computer Iceberg Meme

This is a repost promoting content originally published elsewhere.

More awesome from Blackle Mori, whose praises I sang recently over The Basilisk Collection. This time we’re treated to a curated list of 182 articles demonstrating the “peculiarities and weirdness” of computers. Starting from relatively well-known memes like little Bobby Tables, the year 2038 problem, and how all web browsers pretend to be each other, we descend through the fast inverse square root (made famous by Quake III), falsehoods programmers believe about time (personally I’m more of a fan of …names, but then you might expect that), the EICAR test file, the “thank you for playing Wing Commander” EMM386 in-memory hack, The Basilisk Collection itself, and the GIF MD5 hashquine (which I’ve shared previously) before eventually reaching the esoteric depths of posuto and the nightmare that is Japanese postcodes…

Plus many, many things that were new to me and that I’ve loved learning about these last few days.

It’s definitely not a competition; it’s a learning opportunity wrapped up in the weirdest bits of the field. Have an explore and feed your inner computer science geek.

Note #17583

Needed new UPS batteries.

Almost bought from @insight_uk but they require registration to checkout.

Purchased from @SourceUPSLtd instead.

Moral: having no “guest checkout” costs you business.