I’ve been watching the output that people (or rather, machines) around the Internet have been producing using GPT-3 (and its cousins), an AI model that can produce long-form “human-like” text. Here are some things I’ve enjoyed recently:

I played for a bit with AI Dungeon’s (premium) Dragon engine, which came up with Dan and the Spider’s Curse when used as a virtual DM/GM. I pitched an idea to Robin lately that one could run a vlog series based on AI Dungeon-generated adventures: coming up with a “scene”, performing it, publishing it, and taking suggestions via the comments for the direction in which the adventure might go next (but leaving the AI to do the real writing).

Today is Spaceship Day is a Plotagon-powered machinima based on a script written by Botnik’s AI. So not technically GPT-3 if you’re being picky, but it’s still amusing to see what the AI’s creative mind has come up with.

The holy founding text of The Church of the Next Word, as revealed to Frank Lantz takes the idea in a different direction. Republished on his blog by Matt Webb (because who wants to read text, in an image, in a Tweet?), it represents an attempt to establish the tenets of a new religion, as imagined by GPT-3. The seventh principle of Nextwordianism is especially profound:

Language contains the map to a better world. Those that are most skilled at removing obstacles, misdirection, and lies from language, that reveal the maps that are hidden within, are the guides that will lead us to happiness.

Yesterday, The Guardian published the op-ed piece A robot wrote this entire article. Are you scared yet, human? It’s edited together from half a dozen or so essays produced by the AI from the same starting prompt, but the editor insists that this took less time than the editing process on most human-authored op-eds. It’s good stuff. I found myself reminded of Nobody Knows You’re A Machine, a short story I wrote about eight years ago and was never entirely happy with but which I’ve put online in order to allow you to see for yourself what I mean.

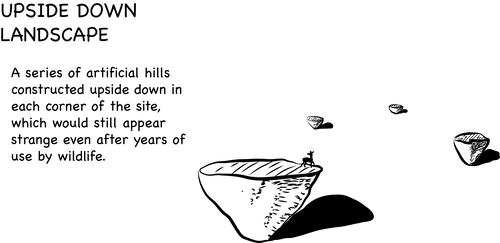

But my favourite so far must be GPT-3’s attempt to write its own version of Expert judgment on markers to deter inadvertent human intrusion into the Waste Isolation Pilot Plant, which occasionally circulates the Internet retitled with its line This place is not a place of honor…no highly esteemed deed is commemorated here… nothing valued is here. The original document was a report into how humans might mark a nuclear waste disposal site in order to discourage deliberate or accidental tampering with the waste stored there: a massive challenge, given that the waste will remain dangerous for many thousands of years! The original paper’s worth a read, of course, but mostly as a preface to reading a post by Janelle Shane (whose work I’ve mentioned before) about teaching GPT-3 to write nuclear waste site area denial strategies. It’s pretty special.

As effective conversational AI becomes increasingly accessible, I become increasingly convinced that we might eventually see a sandwichware future, where it’s cheaper for an appliance developer to install an AI into the device (to allow it to learn how to communicate with your other appliances, in a human language, just like you will) rather than rely on a static and universal underlying computer protocol as an API. Time will tell.

Meanwhile: I promise that this post was written by a human!
