…

5. If you use AI, you are the one who is accountable for whatever you produce with it. You have to be certain that whatever you produced was correct. You cannot ask the system itself to do this. You must either already be expert at the task you are doing so you can recognise good output yourself, or you must check through other, different means the validity of any output.

…

9. Generative AI produces above average human output, but typically not top human output. If you overuse generative AI you may produce more mediocre output than you are capable of.

…

I was also tempted to include in 9 as a middle sentence “Note that if you are in an elite context, like attending a university, above average for humanity widely could be below average for your context.”

…

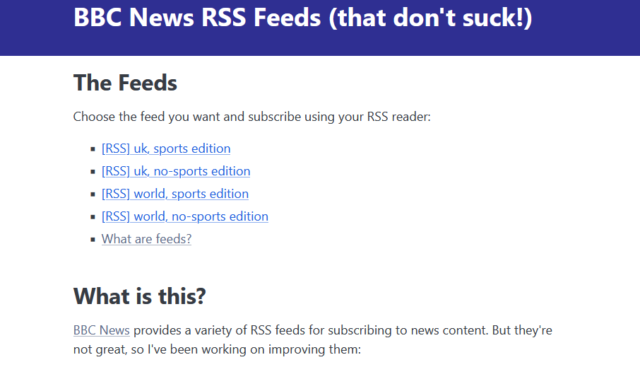

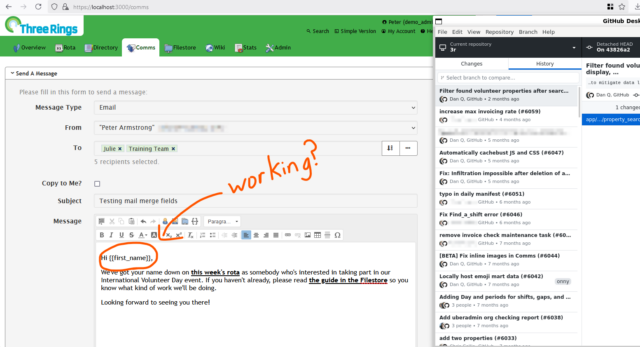

In this excellent post, Joanna says more-succinctly what I was trying to say in my comments on “AI Is Reshaping Software Engineering — But There’s a Catch…” a few days ago. In my case, I was talking very-specifically about AI as a programmer’s assistant, and Joanna’s points 5. and 9. are absolutely spot on.

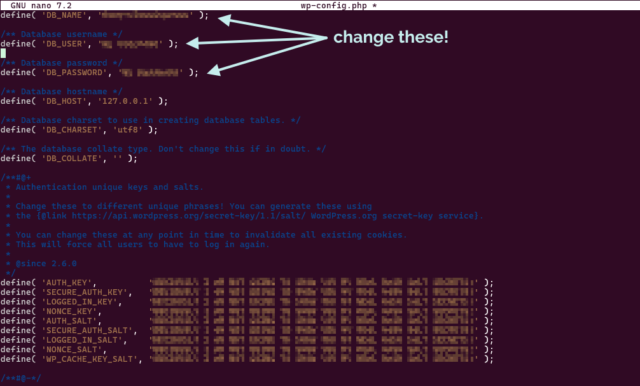

Point 5 is a reminder that, as I’ve long said, you can’t trust an AI to do anything that you can’t do for yourself. I sometimes use a GenAI-based programming assistant, and I can tell you this – it’s really good for:

- Fancy autocomplete: I start typing a function name, it guesses which variables I’m going to be passing into the function or that I’m going to want to loop through the output or that I’m going to want to return-early f the result it false. And it’s usually right. This is smart, and it saves me keypresses and reduces the embarrassment of mis-spelling a variable name1.

-

Quick reference guide: There was a time when I had all of my PHP

DateTimeInterface::formatcharacter codes memorised. Now I’d have to look them up. Or I can write a comment (which I should anyway, for the next human) that says something like// @returns String a date in the form: Mon 7th January 2023and when I get to mydate(...)statement the AI will already have worked out that the format is'D jS F Y'for me. I’ll recognise a valid format when I see it, and I’ll be testing it anyway. - Boilerplate: Sometimes I have to work in languages that are… unnecessarily verbose. Rather than writing a stack of setters and getters, or laying out a repetitive tree of HTML elements, or writing a series of data manipulations that are all subtly-different from one another in ways that are obvious once they’ve been explained to you… I can just outsource that and then check it2.

- Common refactoring practices: “Rewrite this Javascript function so it doesn’t use jQuery any more” is a great example of the kind of request you can throw at an LLM. It’s already ingested, I guess, everything it could find on StackOverflow and Reddit and wherever else people go to bemoan being stuck with jQuery in their legacy codebase. It’s not perfect – just like when it’s boilerplating – and will make stupid mistakes3 but when you’re talking about a big function it can provide a great starting point so long as you keep the original code alongside, too, to ensure it’s not removing any functionality!

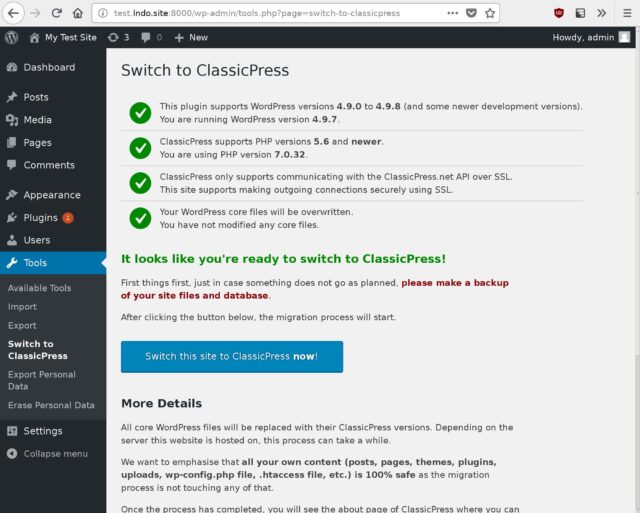

Other things… not so much. The other day I experimentally tried to have a GenAI help me to boilerplate some unit tests and it really failed at it. It determined pretty quickly, as I had, that to test a particular piece of functionality need to mock a function provided by a standard library, but despite nearly a dozen attempts to do so, with copious prompting assistance, it couldn’t come up with a working solution.

Overall, as a result of that experiment, I was less-effective as a developer while working on that unit test than I would have been had I not tried to get AI assistance: once I dived deep into the documentation (and eventually the source code) of the underlying library I was able to come up with a mocking solution that worked, and I can see why the AI failed: it’s quite-possibly never come across anything quite like this particular problem in its training set.

Solving it required a level of creativity and a depth of research that it was simply incapable of, and I’d clearly made a mistake in trying to outsource the problem to it. I was able to work around it because I can solve that problem.

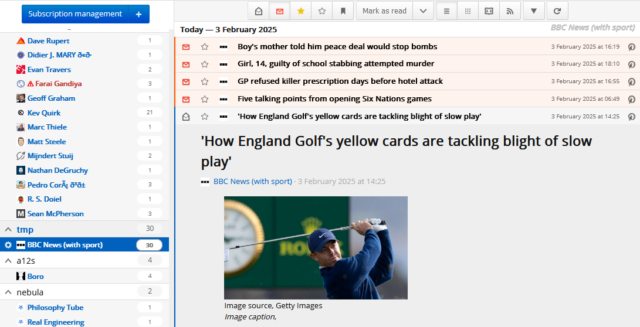

But I know people who’ve used GenAI to program things that they wouldn’t be able to do for themselves, and that scares me. If you don’t understand the code your tool has written, how can you know that it does what you intended? Most developers have a blind spot for testing and will happy-path test their code without noticing if they’ve introduced, say, a security vulnerability owing to their handling of unescaped input or similar… and that’s a problem that gets much, much worse when a “developer” doesn’t even look at the code they deploy.

Security, accessibility, maintainability and performance – among others, I’ve no doubt – are all hard problems that are not made easier when you use an AI to write code that you don’t understand.

Footnotes

1 I’ve 100% had an occasion when I’ve called something $theUserID in one

place and then $theUserId in another and not noticed the case difference until I’m debugging and swearing at the computer

2 I’ve described the experience of using an LLM in this way as being a little like having a very-knowledgeable but very-inexperienced junior developer sat next to me to whom I can pass off the boring tasks, so long as I make sure to check their work because they’re so eager-to-please that they’ll choose to assume they know more than they do if they think it’ll briefly impress you.

3 e.g. switching a selector from $(...) to

document.querySelector but then failing to switch the trailing .addClass(...) to .classList.add(...)– you know: like an underexperienced but

eager-to-please dev!

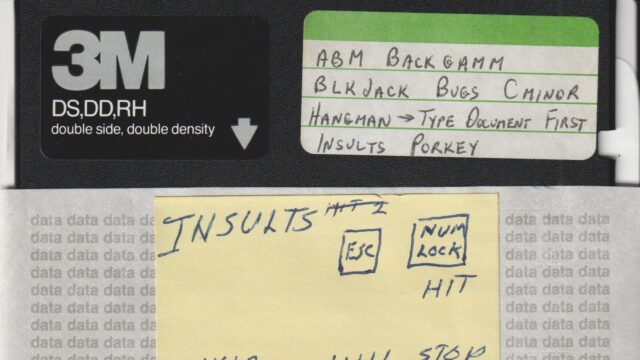

![Comic comparing 'Devs Then' to 'Devs Now'. The 'Devs Then' are illustrated as muscular men, with captions 'Writes code without AI or Stack Overflow', 'Builds entire games in Assembly', 'Crafts mission-critical code fo [sic] Moon landing', and 'Fixes memory leaks by tweaking pointers'. The 'Devs Now' are illustrated with badly-drawn, somewhat-stupid-looking faces and captioned 'Googles how to center a div in 2025?', 'ChatGPT please fix my syntax error', 'Cannot exit vim', and 'Fixes one bug, creates three new ones'.](https://bcdn.danq.me/_q23u/2025/02/devs-then-devs-now-lieo-640x601.jpg)