I woke up with this in my head and had to draw it.

Tag: comic

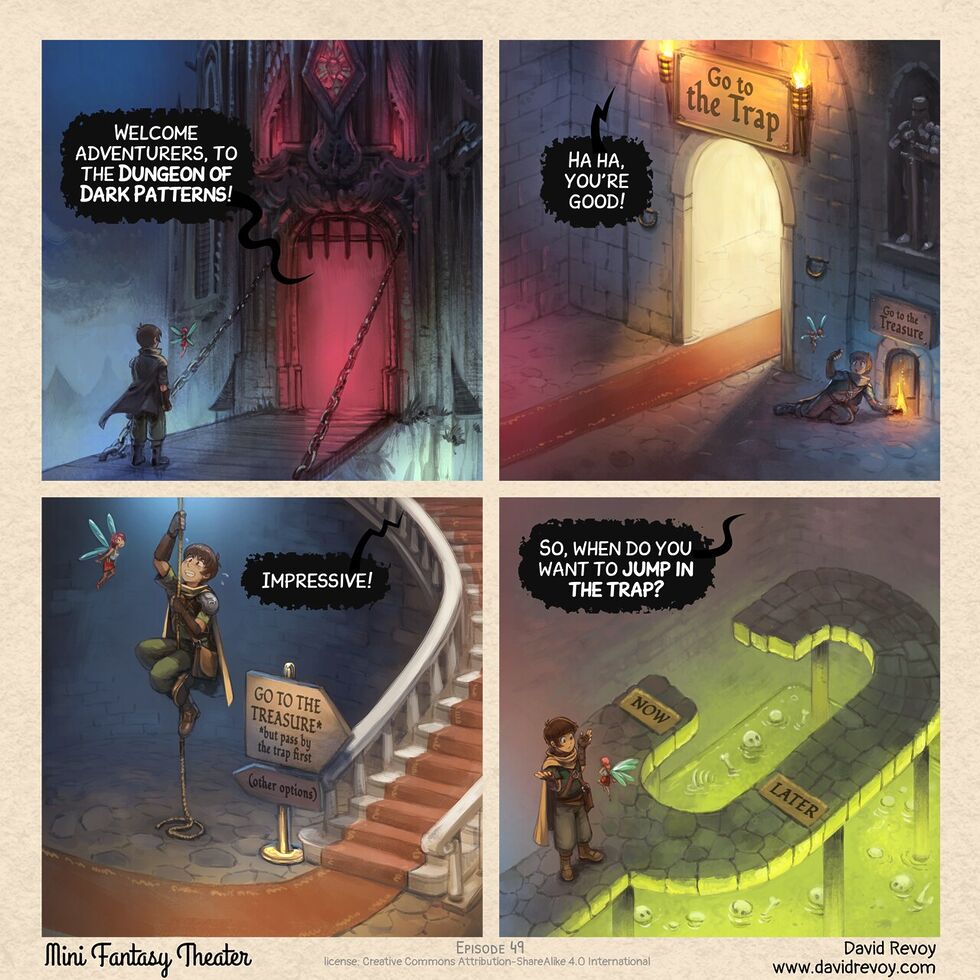

The Dungeon of Dark Patterns

This is a repost promoting content originally published elsewhere. See more things Dan's reposted.

Well this is just excellent.

I’d not come across David Revoy before today, but he’s apparently been doing art and comics since 2014. The Mini Fantasy Theatre series started a couple of years ago, but is totally getting added to my RSS reader. Almost everything’s bilingual English/French too, if that’s something that interests you.

Navigating around the dark patterns of modern UX certainly feels like a dungeon delve, sometimes. Now we just need the episode in which the adventurer has difficulty unsubscribing from requests from their patron…

MegaConLive London

My 12-year-old’s persuaded me to take her to MegaConLive London this weekend.

As somebody who doesn’t pay much attention to the pop culture circles represented by such an event (and hasn’t for 15+ years, or whenever it was that Asdfbook came out?)… have you got any advice for me, Internet?

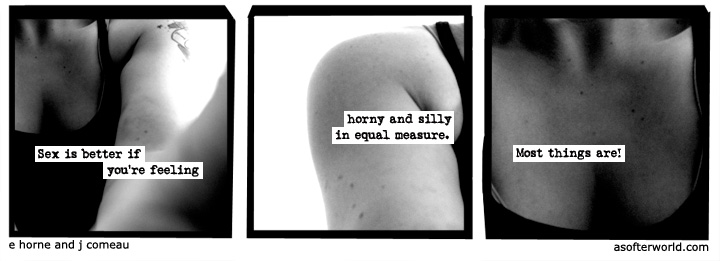

Horny and Silly

This year it’ll be 10 years since webcomic A Softer World ended its 12-year run. If you missed it, you can still go back and read them all, starting from asofterworld.com/index.php?id=1

But in the meantime, here’s one of my very favourites:

AI Is Reshaping Software Engineering — But There’s a Catch…

This is a repost promoting content originally published elsewhere. See more things Dan's reposted.

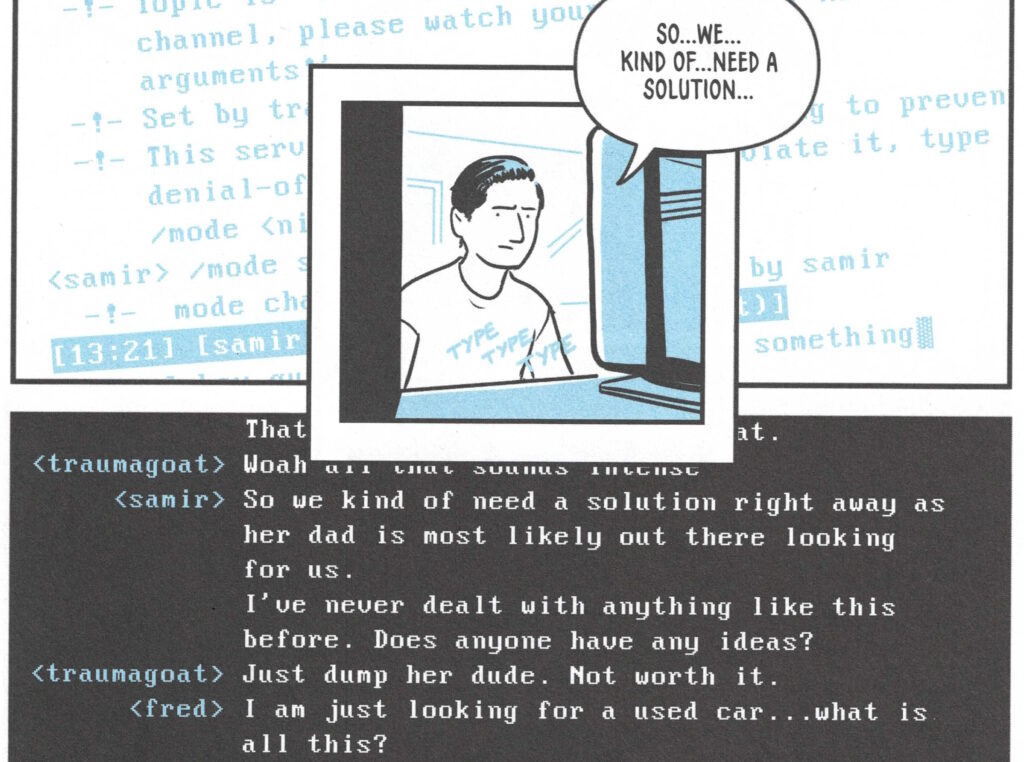

I don’t believe AI will replace software developers, but it will exponentially boost their productivity. The more I talk to developers, the more I hear the same thing—they’re now accomplishing in half the time what used to take them days.

But there’s a risk… Less experienced developers often take shortcuts, relying on AI to fix bugs, write code, and even test it—without fully understanding what’s happening under the hood. And the less you understand your code, the harder it becomes to debug, operate, and maintain in the long run.

So while AI is a game-changer for developers, junior engineers must ensure they actually develop the foundational skills—otherwise, they’ll struggle when AI can’t do all the heavy lifting.

Eduardo picks up on something I’ve been concerned about too: that the productivity boost afforded to junior developers by AI does not provide them with the necessary experience to be able to continue to advance their skills. GenAI for developers can be a dead end, from a personal development perspective.

That’s a phenomenon not unique to AI, mind. The drive to have more developers be more productive on day one has for many years led to an increase in developers who are hyper-focused on a very specific, narrow technology to the exclusion even of the fundamentals that underpin it.

When somebody learns how to be a “React developer” without understanding enough about HTTP to explain which bits of data exist on the server-side and which are delivered to the client, for example, they’re at risk of introducing security problems. We see this kind of thing a lot!

There’s absolutely nothing wrong with not-knowing-everything, of course (in fact, knowing where the gaps around the edges of your knowledge are and being willing to work to fill them in, over time, is admirable, and everybody should be doing it!). But until they learn, a developer that lacks a comprehension of the fundamentals on which they depend needs to be supported by a team that “fill the gaps” in their knowledge.

AI muddies the water because it appears to fulfil the role of that supportive team. But in reality it’s just regurgitating code synthesised from the fragments it’s read in the past without critically thinking about it. That’s fine if it’s suggesting code that the developer understands, because it’s like… “fancy autocomplete”, which they can accept or reject based on their understanding of the domain. I use AI in exactly this way many times a week. But when people try to use AI to fill the “gaps” at the edge of their knowledge, they neither learn from it nor do they write good code.

I’ve long argued that as an industry, we lack a pedagogical base: we don’t know how to teach people to do what we do (this is evidenced by the relatively high drop-out rate on computer science courses, the popular opinion that one requires a particular way of thinking to be a programmer, and the fact that sometimes people who fail to learn programming through one paradigm are suddenly able to do so when presented with a different one). I suspect that AI will make this problem worse, not better.

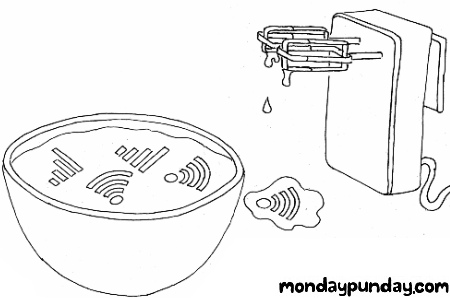

Monday Punday

Have you come across Monday Punday? I only discovered it last year, sadly, after it had been on hiatus for like 4 years, following a near decade-long run, but I figured that if you like wordplay and webcomics as much as I do (e.g. if you enjoyed my Movie Title Mash-Ups, back in the day), then perhaps you’ll dig it too.

I’ve been gradually making my way through the back catalogue, guessing the answers (there’s a form that’ll tell you if you’re right!). I’ve successfully guessed almost half of all of them, now, and it’s been a great journey. It sort-of fills the void that I’d hoped Crimson Herring was going to before it vanished so suddenly.

So if you’re looking for a fresh, probably-finished webcomic that’ll sometimes make you laugh, sometimes make you groan, and often make you think, start by skimming the rules of Monday Punday and then begin the long journey through the ~500 published episodes. You’re welcome!

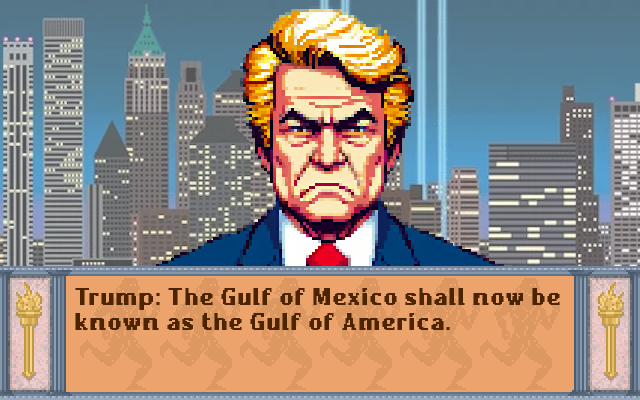

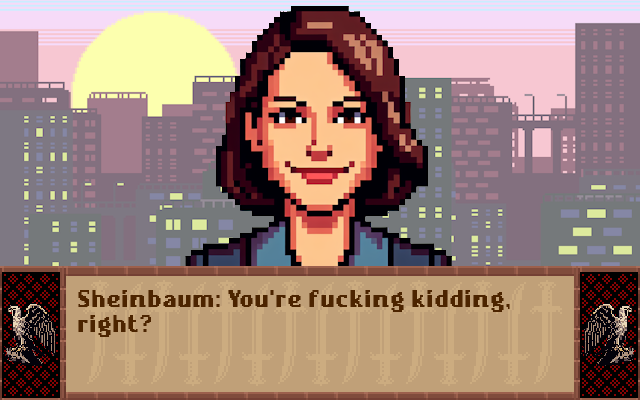

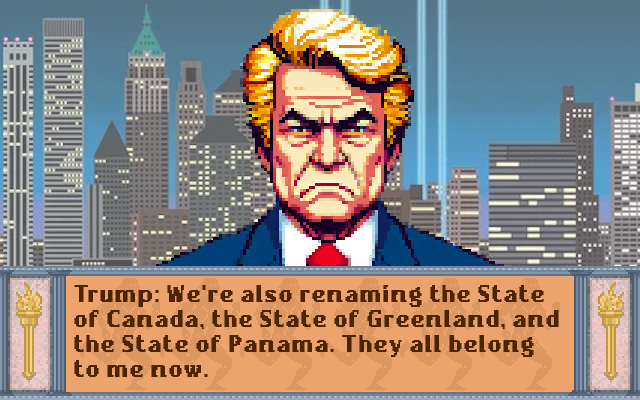

Trump’s Strategy

What do you reckon? Is he trying to go for a domination victory without ever saying “MY THREATS ARE BACKED BY NUCLEAR WEAPONS!”? His track record shows that he’s arrogant enough to think that the strategy of simply renaming things until they’re yours is actually viable!

After I saw Mexico’s response to Google following Trump’s lead in renaming the Gulf of Mexico, this stupid comic literally came to me in a dream.

Adapts screenshots from Sid Meier’s Civilization (1991 DOS version), public domain assets from OpenGameArt.org, and AI-assisted images of world leaders on account of the fact that if I drew pixel-art world leaders without assistance then you’d be even less-likely to be able to recognise them.

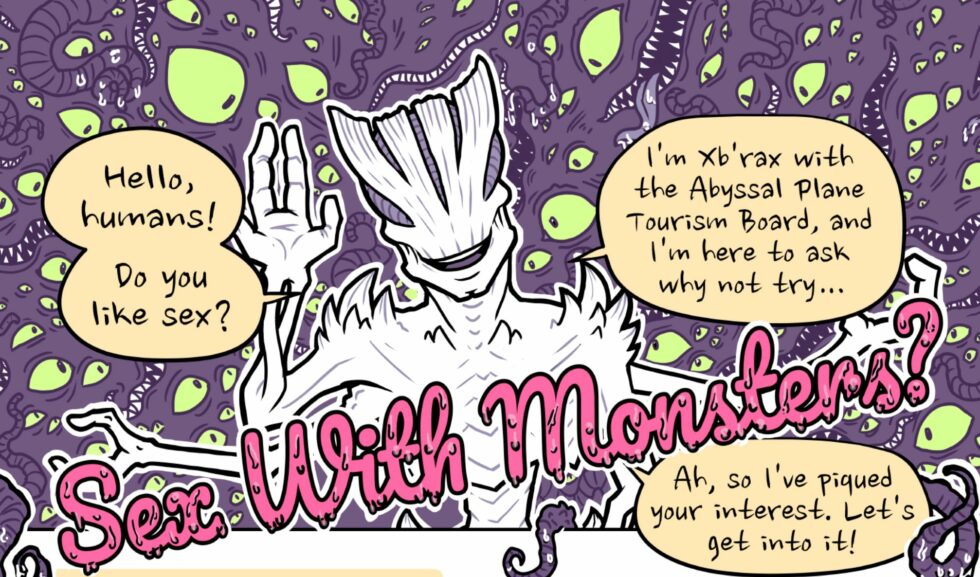

Sex With Monsters

This is a repost promoting content originally published elsewhere. See more things Dan's reposted.

Just in time for Halloween, this comic (published via the ever-excellent Oh Joy Sex Toy) is fundamentally pretty silly… and yet still manages to touch upon important concepts of safer sex, consent, aftercare etc. And apparently, based on Simon’s portfolio, his “thing” might well be that niche but now fun-sounding genre of “queer/monster horror”.

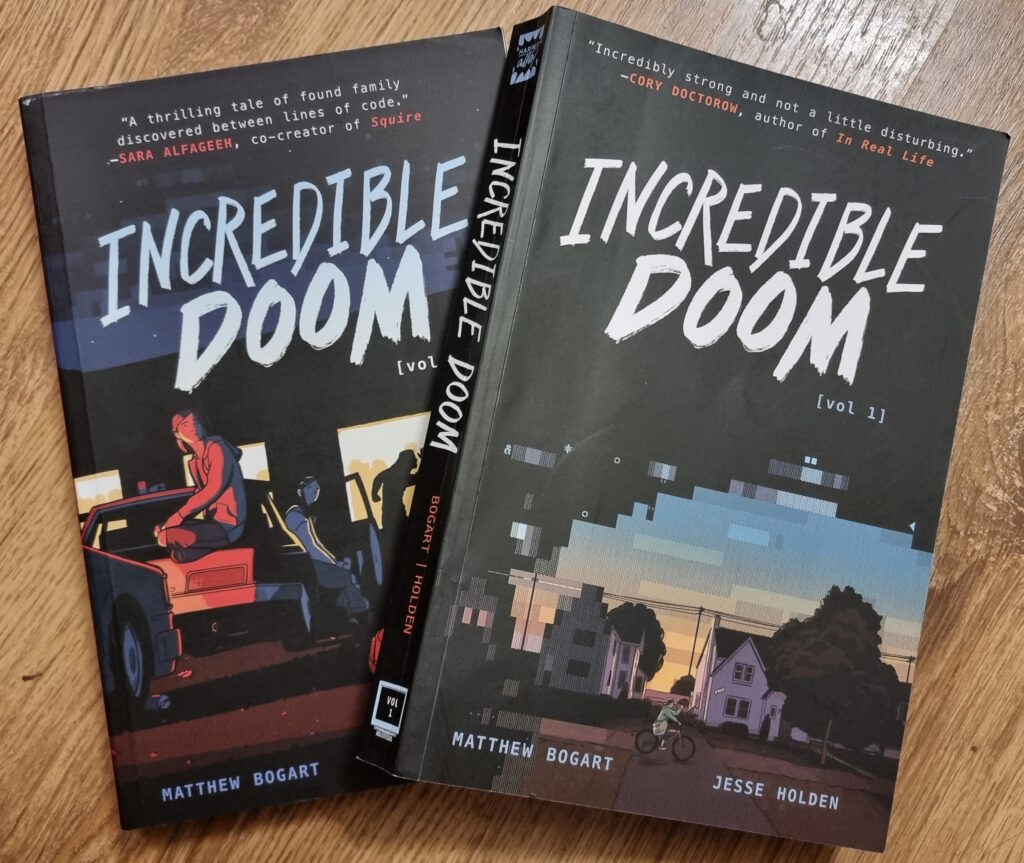

Incredible Doom

I just finished reading Incredible Doom volumes 1 and 2, by Matthew Bogart and Jesse Holden, and man… that was a heartwarming and nostalgic tale!

Set in the early-to-mid-1990s world in which the BBS is still alive and kicking, and the Internet’s gaining traction but still lacks the “killer app” that will someday be the Web (which is still new and not widely-available), the story follows a handful of teenagers trying to find their place in the world. Meeting one another in the 90s explosion of cyberspace, they find online communities that provide connections that they’re unable to make out in meatspace.

It touches on experiences of 90s cyberspace that, for many of us, were very definitely real. And while my online “scene” at around the time that the story is set might have been different from that of the protagonists, there’s enough of an overlap that it felt startlingly real and believable. The online world in which I – like the characters in the story – hung out… but which occupied a strange limbo-space: both anonymous and separate from the real world but also interpersonal and authentic; a frontier in which we were still working out the rules but within which we still found common bonds and ideals.

Anyway, this is all a long-winded way of saying that Incredible Doom is a lot of fun and if it sounds like your cup of tea, you should read it.

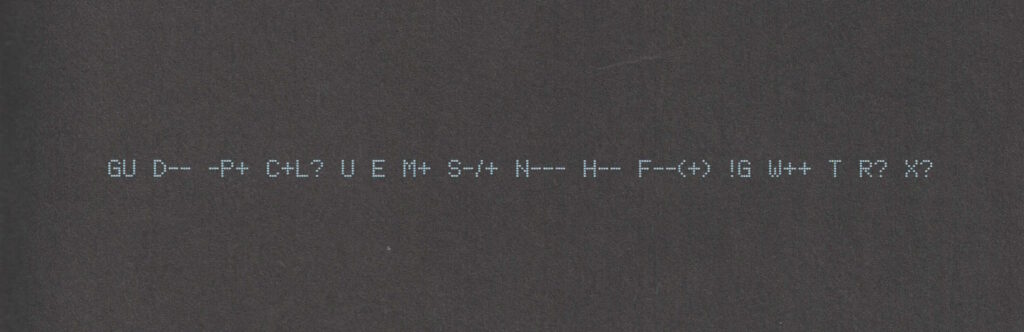

Also: shortly after putting the second volume down, I ended up updating my Geek Code for the first time in… ooh, well over a decade. The standards have moved on a little (not entirely in a good way, I feel; also they’ve diverged somewhat), but here’s my attempt:

----- BEGIN GEEK CODE VERSION 6.0 ----- GCS^$/SS^/FS^>AT A++ B+:+:_:+:_ C-(--) D:+ CM+++ MW+++>++ ULD++ MC+ LRu+>++/js+/php+/sql+/bash/go/j/P/py-/!vb PGP++ G:Dan-Q E H+ PS++ PE++ TBG/FF+/RM+ RPG++ BK+>++ K!D/X+ R@ he/him! ----- END GEEK CODE VERSION 6.0 -----

Footnotes

1 I was amazed to discover that I could still remember most of my Geek Code syntax and only had to look up a few components to refresh my memory.

Groundhog Day

This is a repost promoting content originally published elsewhere. See more things Dan's reposted.

After a break of nine and a half years, webcomic Octopuns is back. I have two thoughts:

- That’s awesome. I love Octopuns and I’m glad it’s back. If you want a quick taster – a quick slice, if you will – of its kind of humour, I suggest starting with Pizza.

- How did I know that Octopuns was back? My RSS reader told me. RSS remains a magical way to keep an eye on what’s happening on the Internet: it’s like a subscription service that delivers you exactly what you want, as soon as it’s available.

I’ve been pleasantly surprised before when my feed reader has identified a creator that’s come back from the dead. I ❤️ FreshRSS.

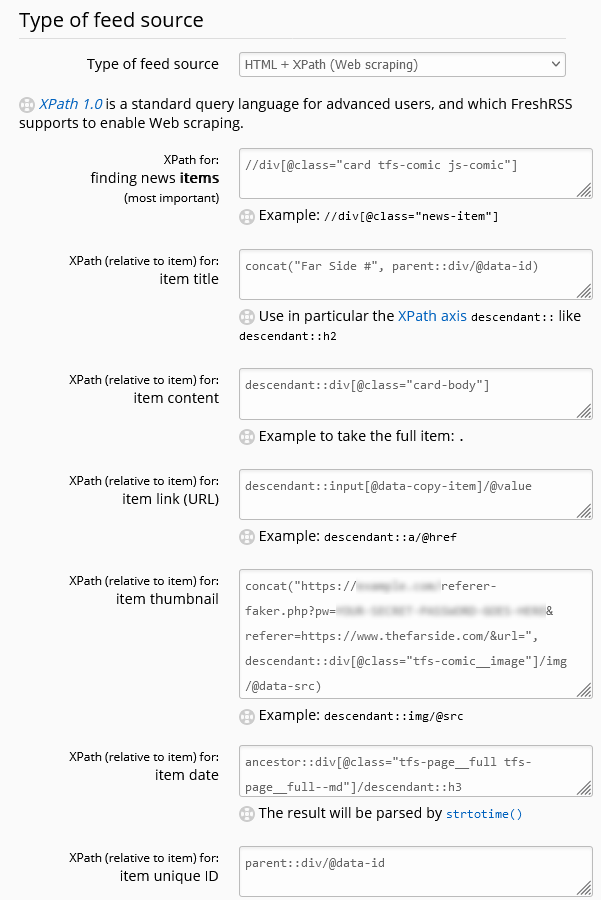

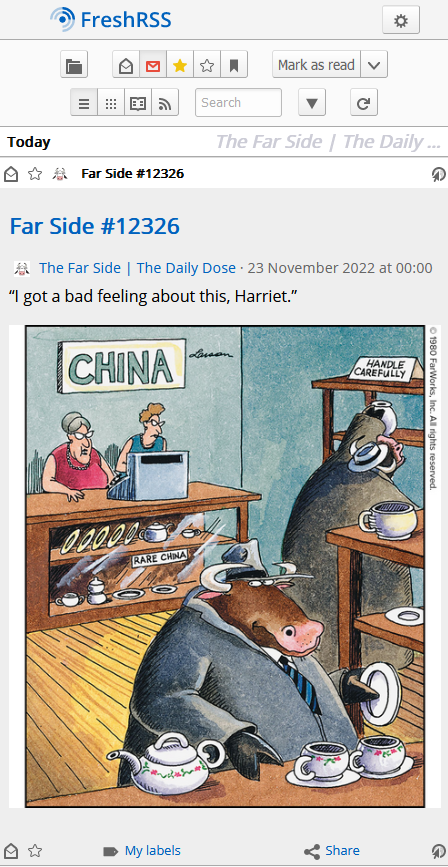

New Far Side in FreshRSS

I got some great feedback to yesterday’s post about using FreshRSS + XPath to subscribe to Forward, including helpful comments from FreshRSS developer Alexandre Alapetite and from somebody who appreciated it and my Far Side “Daily Dose” recipe and wondered if it was possible to get the new Far Side content in FreshRSS too.

Wait, there’s new Far Side content? Yup: it turns out Gary Larson’s dusted off his pen and started drawing again. That’s awesome! But the last thing I want is to have to go to the website once every few… what: days? weeks? months? He’s not syndicated any more so he’s not got a deadline to work to! If only there were some way to have my feed reader, y’know, do it for me and let me know whenever he draws something new.

Here’s my setup for getting Larson’s new funnies right where I want them:

- Feed URL:

  https://www.thefarside.com/new-stuff/1

  This isn’t a valid address for any of the new stuff, but always seems to redirect to somewhere that is, so that’s nice.

- XPath for finding news items:

  //div[@class="swiper-slide"]

  Turns out all the “recent” new stuff gets loaded in the HTML and then JavaScript turns it into a slider etc.; some of the CSS classes change when the JavaScript runs so I needed to View Source rather than use my browser’s inspector to find everything.

- Item title:

  concat("Far Side #", descendant::button[@aria-label="Share"]/@data-shareable-item)

  Ugh. The easiest place I could find a “clean” comic ID number was in a data- attribute of the “share” button, where it’s presumably used for engagement tracking. Still, whatever works right?

- Item content:

  descendant::figcaption

  When Larson captions a comic, the caption is important.

- Item link (URL) and item unique ID:

  concat("https://www.thefarside.com", ./@data-path)

  The URLs work as direct links to the content, and because they’re unique, they make a reasonable unique ID too (so long as their numbering scheme is internally-consistent, this should stop a re-run of new content popping up in your feed reader if the same comic comes around again).

- Item thumbnail:

  concat("https://fox.q-t-a.uk/referer-faker.php?pw=YOUR-SECRET-PASSWORD-GOES-HERE&referer=https://www.thefarside.com/&url=", descendant::img[@data-src]/@data-src)

  The Far Side uses Referer: headers as an anti-hotlinking measure, which prevents us easily loading the images directly in an RSS reader. I use this tiny PHP script as a proxy to mitigate that. If you don’t have such a proxy set up, you could simply omit the “Item thumbnail” and “Item content” fields and click the link to go to the original page.

- Item date:

  normalize-space(descendant::div[@class="tfs-comic-new__meta"]/*[1])

  The date is spread through two separate text nodes, so we get the content of their wrapper and use normalize-space to tidy the whitespace up. The date format then looks like “Wednesday, March 29, 2023”, which we can parse using a custom date/time format string:

- Custom date/time format:

  l, F j, Y
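If you want to sanity-check that date handling outside of FreshRSS, here’s a rough Python sketch of the same two steps: tidy the whitespace, then parse what’s left. It isn’t what FreshRSS runs internally. The function name is made up for illustration, and `%A, %B %d, %Y` is only my approximation of PHP’s `l, F j, Y` (it assumes English day and month names).

```python
from datetime import datetime


def parse_far_side_date(raw: str) -> datetime:
    """Parse a date like 'Wednesday, March 29, 2023' (hypothetical helper)."""
    # Collapse runs of whitespace and trim the ends, roughly what
    # XPath's normalize-space() does to the scraped text.
    tidy = " ".join(raw.split())
    # Approximate strptime counterpart of PHP's "l, F j, Y".
    # Assumes the default (English) locale for day and month names.
    return datetime.strptime(tidy, "%A, %B %d, %Y")


# Simulates a date that was split across two text nodes.
print(parse_far_side_date("  Wednesday,\n      March 29, 2023  ").date())
```

If the site ever changes its date wording, `strptime` raises a `ValueError`, which is a handy early warning that the recipe needs updating.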

I promise I’ll stop writing about how awesome FreshRSS + XPath is someday. Today isn’t that day.

Meanwhile: if you used to use a feed reader but gave up when the Web started to become hostile to them and big social media systems started to wall you in, you should really consider picking one up again. The stuff I write about is complex edge-cases that most folks don’t need to think about in order to benefit from RSS… but it’s super convenient to have the things you care about online (news, blogs, social media, videos, newsletters, comics, search trends…) collated and sorted for you… without interference from algorithms that want to push “sticky” content, without invasive tracking or advertisements (or cookie banners or privacy popups), without something “disappearing” simply because you put off reading it for a few days.

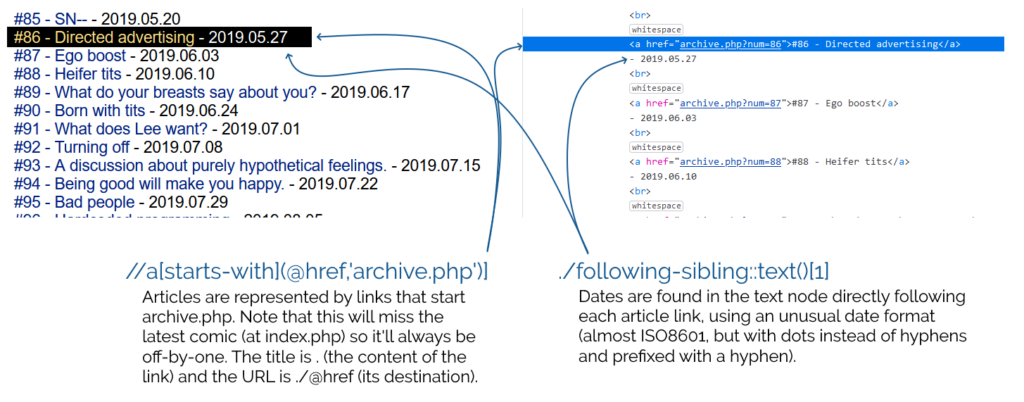

Subscribing to Forward using FreshRSS’s XPath Scraping

As I’ve mentioned before, I’m a fan of Tailsteak’s Forward comic. I’m not a fan of the author’s weird aversion to RSS, so I hacked a way around it first using an exploit in webcomic reader app Comic Chameleon (accidentally getting access to comics weeks in advance of their publication as a side-effect) and later by using my own tool RSSey.

But now I’m able to use my favourite feed reader FreshRSS to scrape websites directly – like I’ve done for The Far Side – I should switch to using this approach to subscribe to Forward, too:

Here’s the settings I came up with –

- Feed URL:

  http://forwardcomic.com/list.php

- Type of feed source:

  HTML + XPath (Web scraping)

- XPath for finding news items:

  //a[starts-with(@href,'archive.php')]

- Item title:

  .

- Item link (URL):

  ./@href

- Item date:

  ./following-sibling::text()[1]

- Custom date/time format:

  - Y.m.d

A list of <a>s separated by <br>s rather than a <ul> and <li>s, for example, leaves something to be desired (and makes it harder to scrape, too!).
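To show what those rules are picking out, here’s a minimal Python sketch that mimics them using only the standard library. The sample markup, the `num` query parameter, the titles and the dates are all invented for illustration (I’m guessing at the general shape of the page, not quoting it), and the function name is hypothetical. ElementTree doesn’t support `starts-with()`, so the filtering is done in plain Python instead, and each link’s `tail` stands in for `following-sibling::text()[1]`.

```python
import xml.etree.ElementTree as ET
from datetime import datetime
from urllib.parse import urljoin

FEED_URL = "http://forwardcomic.com/list.php"

# Hypothetical sample markup: links separated by <br/>s, each followed by a date.
SAMPLE = """<div>
<a href="archive.php?num=1">First comic</a> - 2018.01.01<br/>
<a href="about.php">About</a><br/>
<a href="archive.php?num=2">Second comic</a> - 2018.01.08<br/>
</div>"""


def scrape_forward(markup: str) -> list[tuple[str, str, str]]:
    """Return (title, link, ISO date) for each archive link in the markup."""
    items = []
    for link in ET.fromstring(markup).iter("a"):
        href = link.get("href", "")
        # Equivalent of //a[starts-with(@href,'archive.php')]
        if not href.startswith("archive.php"):
            continue
        # The text immediately after </a>, parsed like "- Y.m.d"
        date = datetime.strptime((link.tail or "").strip(), "- %Y.%m.%d")
        items.append((link.text, urljoin(FEED_URL, href), date.date().isoformat()))
    return items


for item in scrape_forward(SAMPLE):
    print(item)
```

Note that this only works because the sample is well-formed XML; a real page full of unclosed <br>s would need a forgiving HTML parser instead.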

I continue to love this “killer feature” of FreshRSS, but I’m beginning to see how it could go further – I wish I had the free time to contribute to its development!

I’d love to see a mechanism for exporting/importing feed configurations like this so that I could share them more-easily, for example. I’d also be delighted if I could expand on my XPath rules to load pages referenced by the results and get data from them, too, e.g. so I could use an image found by XPath on the “item link” page as the thumbnail image! These are things RSSey could do for me, but FreshRSS can’t… yet!

Ideal #2 (SMBC)

This is a repost promoting content originally published elsewhere. See more things Dan's reposted.

The SMBC comic that came out this weekend was perhaps the best birthday present the Internet could provide.

The Far Side in FreshRSS

A few years ago, I wanted to subscribe to The Far Side’s “Daily Dose” via my RSS reader. The Far Side doesn’t have an RSS feed, so I implemented a proxy/middleware to bridge the two.

![Browser debugger running document.evaluate('//li[@class="blog__post-preview"]', document).iterateNext() on Beverley's weblog and getting the first blog entry.](https://bcdn.danq.me/_q23u/2022/09/debugger-select-from-xpath-1024x256.png)

It turns out that FreshRSS’s XPath Scraping is almost enough to achieve exactly what I want. The big problem is that the image server on The Far Side website tries to prevent hotlinking by checking the Referer: header on requests, so we need a proxy to spoof that. I threw together a quick PHP program to act as a proxy (if you don’t have this, you’ll have to click-through to read each comic), then configured my FreshRSS feed as follows:

- Feed URL:

  https://www.thefarside.com/

  The “Daily Dose” gets published to The Far Side’s homepage each day.

- XPath for finding news items:

  //div[@class="card tfs-comic js-comic"]

  Finds each comic on the page. This is probably a little over-specific and brittle; I should probably switch to using the contains function at some point. I subsequently have to use parent:: and ancestor:: selectors which is usually a sign that your screen-scraping is suboptimal, but in this case it’s necessary because it’s only at this deep level that we start seeing really specific classes.

- Item title:

  concat("Far Side #", parent::div/@data-id)

  The comics don’t have titles (“The one with the cow”?), but these seem to have unique IDs in the data-id attribute of the parent <div>, so I’m using those as a reference.

- Item content:

  descendant::div[@class="card-body"]

  Within each item, the <div class="card-body"> contains the comic and its text. The comic itself can’t be loaded this way for two reasons: (1) the <img src="..."> just points to a placeholder (the site uses JavaScript-powered lazy-loading, ugh – the actual source is in the data-src attribute), and (2) as mentioned above, there’s anti-hotlink protection we need to work around.

- Item link:

  descendant::input[@data-copy-item]/@value

  Each comic does have a unique link which you can access by clicking the “share” button under it. This makes a hidden text <input> appear, which we can identify by the presence of the data-copy-item attribute. The content of this textbox is the sharing URL for the comic.

- Item thumbnail:

  concat("https://example.com/referer-faker.php?pw=YOUR-SECRET-PASSWORD-GOES-HERE&referer=https://www.thefarside.com/&url=", descendant::div[@class="tfs-comic__image"]/img/@data-src)

  Here’s where I hook into my special proxy server, which spoofs the Referer: header to work around the anti-hotlinking code. If you wanted you might be able to come up with an alternative solution using a custom JavaScript loaded into your FreshRSS instance (there’s a plugin for that!), perhaps to load an iframe of the sharing URL? Or you can host a copy of my proxy server yourself (you can’t use mine, it’s got a password and that password isn’t YOUR-SECRET-PASSWORD-GOES-HERE!)

- Item date:

  ancestor::div[@class="tfs-page__full tfs-page__full--md"]/descendant::h3

  There’s nothing associating each comic with the date it appeared in the Daily Dose, so we have to ascend up to the top level of the page to find the date from the heading.

- Item unique ID:

  parent::div/@data-id

  Giving FreshRSS a unique ID can help it stop showing duplicates. We use the unique ID we discovered earlier; this way, if the Daily Dose does a re-run of something it already did since I subscribed, I won’t be shown it again. Omit this if you want to see reruns.
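The Referer-spoofing trick at the heart of the proxy is simple enough to sketch. The following is not my PHP script, just a minimal Python illustration of the general technique under the assumption that the image server only checks the Referer: header; the function names and the example image path are hypothetical, and a real proxy would also want the password check and some caching.

```python
from urllib.request import Request, urlopen


def build_spoofed_request(url: str, referer: str) -> Request:
    """Build a GET request that claims to have been linked from `referer`."""
    return Request(url, headers={"Referer": referer})


def fetch_image(url: str, referer: str) -> bytes:
    """Fetch the image bytes, presenting the spoofed Referer: header."""
    with urlopen(build_spoofed_request(url, referer), timeout=10) as response:
        return response.read()


# Hypothetical image path; nothing is fetched until fetch_image() is called.
request = build_spoofed_request(
    "https://www.thefarside.com/some-comic.jpg",
    "https://www.thefarside.com/",
)
print(request.get_header("Referer"))
```

Splitting the request-building from the fetching keeps the interesting part (the header) easy to inspect without touching the network.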

There’s a moral to this story: when you make your website deliberately hard to consume, fewer people will access it in the way you want! The Far Side’s website is actively hostile to users (JavaScript lazy-loading, anti-right click scripts, hotlink protection, incorrect MIME types, no feeds etc.), and an inevitable consequence of that is that people like me will find and share workarounds to that hostility.

If you’re ad-supported or collect webstats and want to keep traffic “on your site” on this side of 2004, you should make it as easy as possible for people to subscribe to content. Consider The Oatmeal or Oglaf, for example, which offer RSS feeds that include only a partial thumbnail of each comic and a link through to the full thing. I don’t feel the need to screen-scrape those sites because they’ve given me a subscription option that works, and I routinely click-through to both of them to enjoy their latest content!

Conversely, The Far Side’s aggressive anti-subscription technology ultimately means that there are fewer actual visitors to their website… because folks like me work to circumvent it.

And now you know how I did so.

Update: want the new content that’s being published to The Far Side in FreshRSS, too? I’ve got a recipe for that!

Note #20099

Almost nerdsniped myself when I discovered several #WordPress plugins that didn’t quite do what I needed. Considered writing an overarching one to “solve” the problem. Then I remembered @xkcd comic 927…

![Comic comparing 'Devs Then' to 'Devs Now'. The 'Devs Then' are illustrated as muscular men, with captions 'Writes code without AI or Stack Overflow', 'Builds entire games in Assembly', 'Crafts mission-critical code fo [sic] Moon landing', and 'Fixes memory leaks by tweaking pointers'. The 'Devs Now' are illustrated with badly-drawn, somewhat-stupid-looking faces and captioned 'Googles how to center a div in 2025?', 'ChatGPT please fix my syntax error', 'Cannot exit vim', and 'Fixes one bug, creates three new ones'.](https://bcdn.danq.me/_q23u/2025/02/devs-then-devs-now-lieo-640x601.jpg)