This weekend, my sister Sarah challenged me to define the difference between Virtual Reality and Augmented Reality. And the more I talked about the differences between them, the more I realised that I don’t have a concrete definition, and I don’t think that anybody else does either.

VR: the man sees simulated reality only, which may or may not include a whiteboard.

Either way: what the hell is he doing?

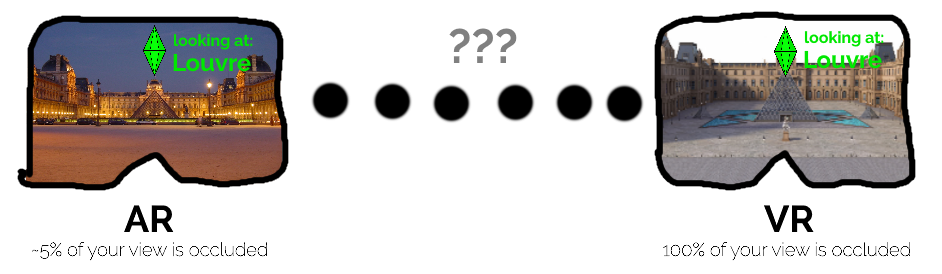

After all: from a technical perspective, any fully-immersive AR system – for example a hypothetical future version of the Microsoft Hololens that solves the current edition’s FOV problems – is, in theory, a superset of any current-generation VR system. That AR augments the reality you can genuinely see, rather than replacing it entirely, becomes irrelevant if that AR system could superimpose a virtual environment covering your entire view. So the argument that, compared to VR, AR only covers part of your vision is not a reliable definition of the difference.

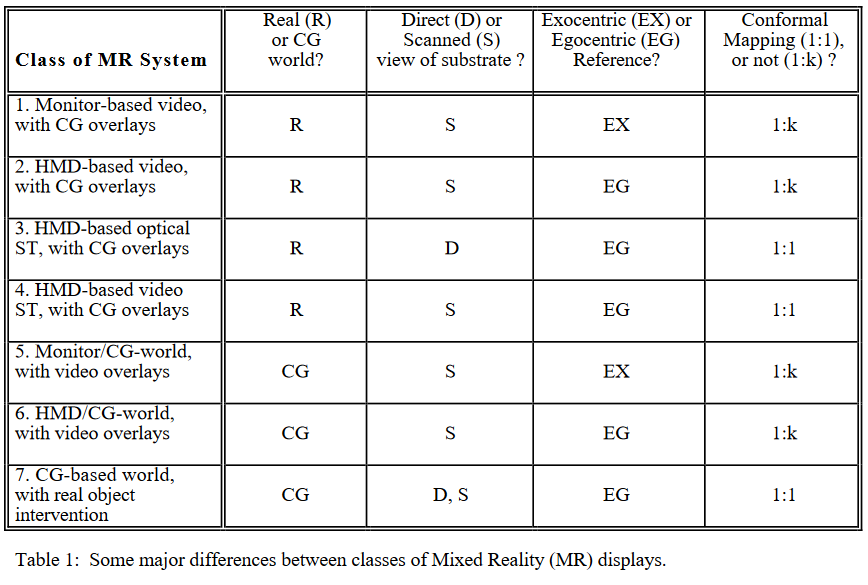

This isn’t a new conundrum. Way back in 1994, when the Sega VR-1 was our idea of cutting edge, Milgram et al. developed a series of metaphorical spectra to describe the relationship between different kinds of “mixed reality” systems. The core difference, they argue, is whether the computer-generated content represents a “world” in itself (VR) or is just an “overlay” (AR).

But that’s unsatisfying for the same reason as above. The HTC Vive headset can be configured to use its front-facing camera(s) to fade seamlessly from the game world to the real world as the player gets close to the boundaries of their play space. This is a safety feature, but it doesn’t have to be: there’s no reason that a HTC Vive couldn’t be adapted to function as what Milgram would describe as a “class 4” device, which is functionally the same as a headset-mounted AR device. So what’s the difference?

You might argue that the difference between AR and VR is content-based: that is, it’s the thing that you’re expected to focus on that dictates which is which. If you’re expected to look at the “real world”, it’s an augmentation, and if not then it’s a virtualisation. But that approach fails to describe Google’s tech demo of putting artefacts in your living room via augmented reality (which I’ve written about before), because your focus is expected to be on the artefact rather than the “real world” around it. The real world only exists to help with the interpretation of scale: it’s not what the experience is about, and your countertop is as valid a real-world target as the Louvre. Google doesn’t care.

Many researchers echo Milgram’s idea that what turns AR into VR is when the computer-generated content completely covers your vision.

But even if we accept this explanation, the definition gets muddied by the wider field of “extended reality” (XR). Originally an umbrella term to cover both AR and VR (and “MR”, if you believe that’s a separate and independent thing), XR gets used to describe interactive experiences that cover other senses, too. If I play a VR game with real-world “props” that I can pick up and move around, but that appear differently in my vision, am I not “augmenting” reality? Is my experience, therefore, more or less “VR” than if the interactive objects exist only on my screen? What about if – as in a recent VR escape room I attended – the experience is enhanced by fans to simulate the movement of air around you? What about smell? (You know already that somebody’s working on bridging virtual reality with Smell-O-Vision.)

Increasingly, then, I’m beginning to feel that XR itself is a spectrum, and a pretty woolly one. Just as it’s hard to specify in a concrete way where the boundary exists between being asleep and being awake, it’s hard to mark where “our” reality gives way to the virtual and vice-versa.

It’s based upon the addition of information to our senses, by a computer, and there can be more (as in fully-immersive VR) or less (as in the subtle application of AR) of it… but the edges are very fuzzy. I guess that the spectrum of the visual experience of XR might look a little like this:

Honestly, I don’t know any more. But I don’t think my sister does either.