The Gell-Mann Amnesia Effect of AI is a pretty well documented phenomenon:

The Gell-Mann amnesia effect is a cognitive bias describing the tendency of individuals to critically assess media reports in a domain they are knowledgeable about, yet continue to trust reporting in other areas despite recognizing similar potential inaccuracies.

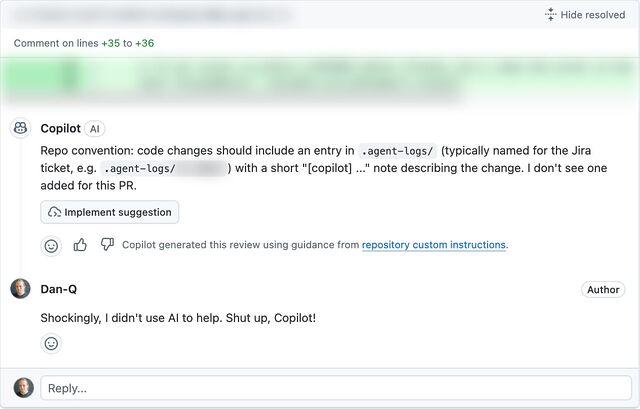

Summarizing, AI sounds like an incredible genius synthesizing the world’s knowledge right up until you ask it about the thing you know about, then it’s an idiot. Even knowing about this phenomenon and having experienced it countless times, LLMs have an intoxicating quality to them.

…

I remember one time, maybe in the mid-1990s, when I saw a shopping channel (remember those? oh god, they’re still a thing, aren’t they?) where the host was trying to sell a personal computer. And… clearly, they knew absolutely nothing about it. They kept hitting on the same two or three talking points they’d been given (“mention the quad-speed CD-ROM drive!”) and fumbling their way through, and it gave me a revelation:

I knew enough about computers that I could see that the presenter was bullshitting their way through the segment. But there are plenty of things that I don’t know much about, which are also sold on this same show. Duvets, jewellery, glassware… I’m nowhere near as much of an expert on these as I was on PC feature sets. Is there something inherently incomprehensible about computers? No. So it’s reasonable to assume that these salespeople probably know equally little about everything they sell; it’s just that I don’t have the knowledge base to be able to see that.

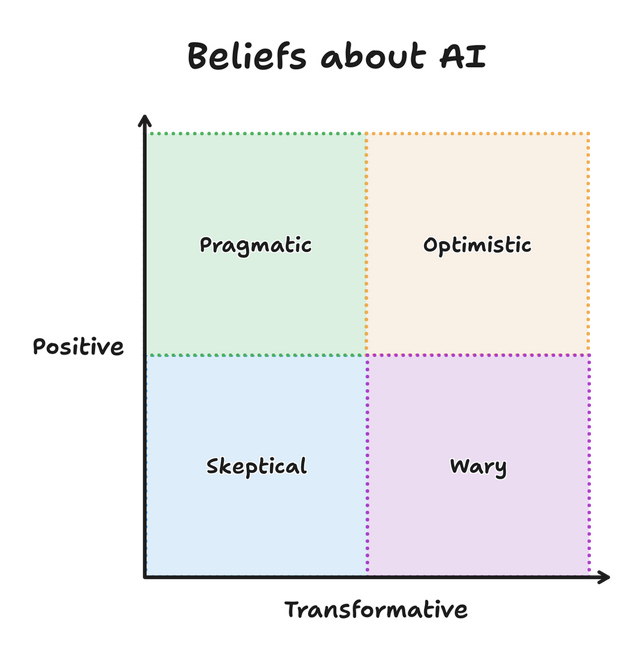

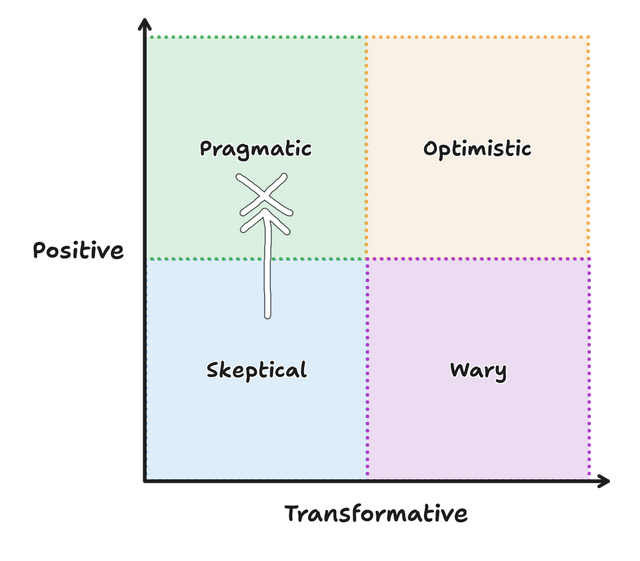

That’s what GenAI often feels like, to me. Having collated all of the publicly available knowledge it could find into its model doesn’t make it smarter than the smartest humans; it probably brings it to somewhere slightly above average in any given subject, depending on the topic. If I ask an LLM about something that I don’t understand well, it often produces highly believable answers, but if I ask it about something that I’m an expert in, it can come off as a fool.

I’m very interested in how we teach information literacy in this new world of rapidly-generated highly-believable nonsense.

Anyway: Dave’s post doesn’t go in that direction – instead, he’s got some clever thoughts about how the “convenience” of a “good enough” AI-driven solution to any given problem risks us seeing humans as the friction point, which ultimately works against those very humans who are looking to benefit from the technology:

…We need experts to share what they know and improve the quality of our work, generated or otherwise. We even need idiots to make sure we can break ideas down into their simplest form that everyone, agent or human, understands. People can have bad attitudes, be shitty, and have wrong opinions… but people are not friction. An LLM may be able to autocorrect its way into a plausible human response, but it’s not people. It doesn’t care if it’s right or wrong.

…