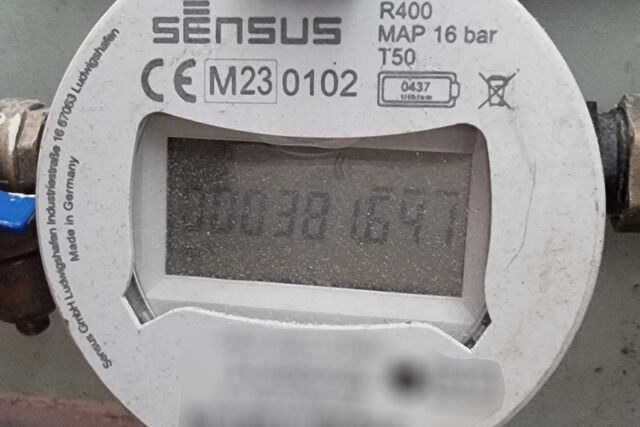

When we picked up the keys to the rental house we’ll be living in while our home is repaired following the flood that forced us to evacuate, I took an initial meter reading, then got in touch with super-reputable water company Thames Water to let them know the situation.

Unfortunately, Thames Water had fucked up¹ and already created an account for us with the wrong information², so by the time I reached out to them they were getting themselves into a pickle.

It turns out that, presumably because of some shortsightedness on the part of their software engineers, their computer systems wouldn’t let them change the information to correct the problem. Nor could they simply delete the account and create a new one³. Instead, they had to close the account they’d erroneously set up, so that its start and end dates were both our moving-in date… and then set up a new account starting from the day after.

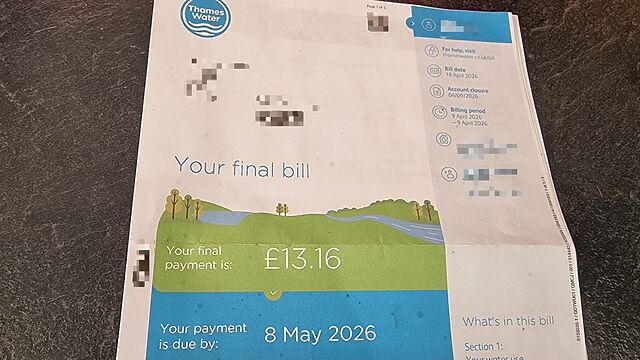

Sigh. Fine! So long as it was sorted, I didn’t even care. Until, that is, the bill arrived for the one day of the first (incorrectly created) contract:

That bill:

- is for £13.16.

- covers “9 April 2026 through 9 April 2026”, i.e. one day.

- implies an estimated annual bill of £4,803.40 (£13.16 × 365), about eight times the national average.

- states that our account closure was/will be on “04/09/2026”. That’s the only date on the letter written in “short” format, and in UK date format it reads as 4 September, even though 9 April would make more sense (but only if you read it in US date format, which would make no sense).
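The annualised figure is simply the one-day charge multiplied by 365, which assumes (generously) that every day of the year looks like that first one. A quick sanity check in Python, using `Decimal` so that money isn’t subjected to floating-point rounding:

```python
from decimal import Decimal

# The one-day charge from the bill, in pounds.
DAILY_CHARGE_GBP = Decimal("13.16")
DAYS_PER_YEAR = 365

# Assumes every day of the year is billed like the first one.
annual = DAILY_CHARGE_GBP * DAYS_PER_YEAR

print(f"£{annual:,.2f}")
```

This prints `£4,803.40`, matching the figure above.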

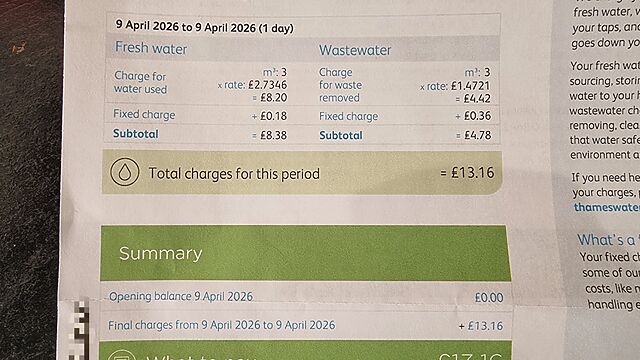

Let’s see how that breaks down:

According to this bill, we used three cubic metres of water between collecting the keys at around 1pm (then moving in and taking a meter reading)… and the end of the day. That’s three thousand litres of water.

Is it possible to use that much water in the eleven hours of billable time that this bill covers? I guess, if you really tried, you could:

- completely fill our 100-litre bathtub three times an hour, taking a five-and-a-half-minute bath each time before draining it again, for the rest of the day⁴; or

- run the kitchen tap – the highest-pressure tap in the house – continuously for six hours and forty minutes; or

- repeatedly flush all three toilets on “full flush” mode, once every 79 seconds until midnight⁵, for example.
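For the curious, here’s a rough sketch of the arithmetic behind those three scenarios. The bath and tap figures come straight from the list; the flow rate and flush volume are *implied* by working backwards from 3,000 litres, not measured. For the toilets it assumes (an interpretation, not something stated above) one full flush in total, across the three toilets, every 79 seconds from about 1pm until midnight.

```python
TOTAL_LITRES = 3 * 1000  # 3 m³ as billed; 1 m³ = 1,000 litres

# Bath: a 100-litre tub filled three times an hour.
BATH_LITRES = 100
BATHS_PER_HOUR = 3
bath_hours = TOTAL_LITRES / (BATH_LITRES * BATHS_PER_HOUR)

# Kitchen tap: running for 6 hours 40 minutes.
# The flow rate is implied by the total, not measured at the tap.
TAP_MINUTES = 6 * 60 + 40
implied_tap_l_per_min = TOTAL_LITRES / TAP_MINUTES

# Toilets: ASSUMPTION - one full flush (across all three toilets)
# every 79 seconds, from about 1pm until midnight (11 hours).
FLUSH_INTERVAL_S = 79
WINDOW_S = 11 * 60 * 60
flushes = WINDOW_S // FLUSH_INTERVAL_S  # whole flushes that fit in the window
implied_litres_per_flush = TOTAL_LITRES / flushes

print(f"bath: {bath_hours:.0f} hours of back-to-back baths")
print(f"tap: {implied_tap_l_per_min:.1f} litres per minute")
print(f"toilets: {flushes} flushes at ~{implied_litres_per_flush:.1f} litres each")
```

That works out at ten hours of back-to-back baths, a tap delivering 7.5 litres a minute, and 501 flushes of roughly six litres each.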

Obviously this is all ridiculous. I’m being ridiculous.

But then again, so is this bill, which claims that three adults spent 11 hours in a house and somehow used enough water to cover the recommended daily drinking intake of… 1,500 adults. Despite the water being shut off for some of that time so that a shower and toilet could be installed.

But then again, so is Thames Water’s computer system, which disallows the correction of mistakes even by their own staff and instead requires the creation of one-day contracts. And also can’t decide which country’s date format to use. And, possibly, doesn’t allow them to obey data protection laws.

The whole thing’s ridiculous. Which I’ll be letting Thames Water know. Let’s see if they agree.

Footnotes

1 This may be no surprise to anybody who’s ever dealt with Thames Water before, or who follows the news about their seemingly endless inability to keep clean water in their pipes and raw sewage out of our rivers, for example, while taking out loans in order to pay bonuses to their self-back-patting executives.

2 They used information provided to them by the estate agent and failed to connect it to the information they already had for us… which thanks to quirks of their information systems resulted in bigger problems down the line. Amusingly – and for a change! – none of the problems were related to my unusual name, this time around.

3 Curiously, these initial mistakes on the part of Thames Water left them processing personal information about me (an email address) that I’d never given to them, and allegedly unable to delete or correct it for six months after being asked to. This is the kind of thing that normally gives me an excuse for a field day of DPA 2018-related letter writing, but this time around I’ve been too busy dealing with the bigger problems they’ve created to have a chance to stop and think about it: that’s how much of a mess they’ve made.

4 It’s only barely possible to refill the bath this quickly, and only if you use both hot and cold water: the cold inlet alone doesn’t have the pressure to fill it fast enough, but the hot water tank has its own separate inlet, which makes all the difference. Also, a cold bath would suck, even if you’re only allowed five minutes in it before it’s time to drain the tub and start filling it again.

5 I once had a really rough night after a particularly dodgy curry, but I’ve never needed to be flushing a toilet twice a minute for eleven hours.