Mostly for my own benefit, as most other guides online are outdated, here’s my set-up for intercepting TLS-encrypted communications from an emulated Android device (in Android Emulator) using Fiddler. This is useful if you want to debug, audit, reverse-engineer, or evaluate the security of an Android app. I’m using Fiddler 5.0 and Android Studio 2.3.3 on Windows (it should work with newer versions too) to intercept connections from an Android 8 (Oreo) device. These instructions are easy to adapt to physical devices and to other configurations.

1. Configure Fiddler

Install Fiddler and run it.

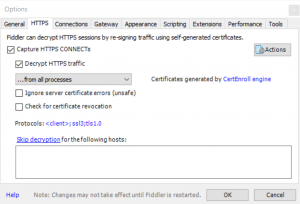

Under Tools > Options > HTTPS, enable “Decrypt HTTPS traffic” and allow a root CA certificate to be created.

Click Actions > Export Root Certificate to Desktop to get a copy of the root CA public key.

On the Connections tab, ensure that “Allow remote computers to connect” is ticked. You’ll need to restart Fiddler after changing this and may be prompted to grant it additional permissions.

If Fiddler changed your system proxy, you can safely change this back (and it’ll simplify your output if you do because you won’t be logging your system’s connections, just the Android device’s ones). Fiddler will complain with a banner that reads “The system proxy was changed. Click to reenable capturing.” but you can ignore it.
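If the emulator later fails to connect, it can help to confirm from the host that Fiddler is actually accepting connections. Here is a minimal sketch in Python, assuming Fiddler is listening on port 8888 (the port used later in this guide); the function name `proxy_is_listening` is purely illustrative:

```python
import socket


def proxy_is_listening(host: str, port: int = 8888, timeout: float = 2.0) -> bool:
    """Return True if something accepts TCP connections at host:port."""
    try:
        # create_connection completes a TCP handshake and raises OSError on failure.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # 127.0.0.1 checks the local listener; pass your LAN address instead to
    # check that "Allow remote computers to connect" has taken effect.
    print(proxy_is_listening("127.0.0.1"))
```

This only shows that a listener exists on the port, not that the listener is Fiddler or that decryption is enabled.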

2. Configure your Android device

Install Android Studio. Click Tools > Android > AVD Manager to get a list of virtual devices. If you haven’t created one already, create one: it’s now possible to create Android devices with Play Store support (look for the icon, as shown above), which means you can easily intercept traffic from third-party applications without doing APK-downloading hacks: this is great if you plan on working out how a closed-source application works (or what it sends when it “phones home”).

In Android’s Settings > Network & Internet, disable WiFi. Then, under Mobile Network > Access Point Names > {Default access point, probably T-Mobile} set Proxy to the local IP address of your computer and Port to 8888. Now all traffic will go over the virtual cellular data connection which uses the proxy server you’ve configured in Fiddler.
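If you’re not sure which local IP address to enter, one way to find it is to ask the operating system which interface it would use for outbound traffic. The sketch below is a best-effort guess rather than a definitive answer (machines with several network adapters may need a manual check); the function name `local_ip` and the probe address are my own choices for illustration:

```python
import socket


def local_ip(probe_host: str = "192.0.2.1", probe_port: int = 80) -> str:
    """Best-effort guess at this machine's outbound IPv4 address."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        # Connecting a UDP socket sends no packets; it only asks the OS
        # which local address it would route from.
        sock.connect((probe_host, probe_port))
        return sock.getsockname()[0]
    except OSError:
        # No usable route (e.g. offline): fall back to loopback.
        return "127.0.0.1"
    finally:
        sock.close()


if __name__ == "__main__":
    print(local_ip())
```

Whatever it prints is the value to try in the APN’s Proxy field; if it prints 127.0.0.1, the lookup failed and you’ll need to find the address by hand.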

Drag the root CA file you exported to your desktop to your virtual Android device. This will automatically copy the file into the virtual device’s “Downloads” folder (if you’re using a physical device, copy via cable or network). In Settings > Security & Location > Encryption & Credentials > Install from SD Card, use the hamburger menu to get to the Downloads folder and select the file: you may need to set up a PIN lock on the device to do this. Check under Trusted credentials > User to check that it’s there, if you like.

Test your configuration by visiting an HTTPS website: as you browse on the Android device, you’ll see the (decrypted) traffic appear in Fiddler. This also works with apps other than the web browser, of course, so if you’re reverse-engineering an API-backed application then encryption doesn’t have to impede you.

3. Not working? (certificate pinning)

A small but increasing number of Android apps implement some variation of built-in key pinning, like HPKP but usually implemented in the application’s code (which is fine, because most people auto-update their apps). This ensures that the certificate presented by the server is signed by a certification authority from a trusted list (a trusted list that doesn’t include Fiddler’s CA!). But remember: the app is running on your device, so you’re ultimately in control – FRIDA’s bypass script “fixed” all of the apps I tried, but if it doesn’t work for you then I’ve heard good things about Inspeckage’s “SSL uncheck” action.
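To make the idea concrete, here’s a rough sketch of one variation of pinning: hashing the certificate the server presents and comparing it against a hard-coded set of known-good SHA-256 fingerprints. This is an illustration in Python, not how any particular Android app does it; many real implementations pin a public key or an issuing CA rather than the whole leaf certificate, and all of the function names here are hypothetical:

```python
import hashlib
import hmac
import ssl
from typing import Iterable


def cert_fingerprint(der_cert: bytes) -> str:
    """SHA-256 fingerprint of a DER-encoded certificate, as lowercase hex."""
    return hashlib.sha256(der_cert).hexdigest()


def matches_pin(der_cert: bytes, pinned_fingerprints: Iterable[str]) -> bool:
    """True if the certificate's fingerprint is one of the pinned values."""
    actual = cert_fingerprint(der_cert)
    return any(
        hmac.compare_digest(actual, pin.lower()) for pin in pinned_fingerprints
    )


def fetch_leaf_cert(host: str, port: int = 443) -> bytes:
    """Fetch the server's leaf certificate (without validating it) as DER."""
    pem = ssl.get_server_certificate((host, port))
    return ssl.PEM_cert_to_DER_cert(pem)
```

When Fiddler sits in the middle, the certificate the app receives is one Fiddler generated on the fly, so its fingerprint won’t be in the pinned set and a check like `matches_pin` fails. That is why a pinned app refuses to talk even though the device trusts Fiddler’s root.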

Summary of steps

If you’re using a distinctly different configuration (different OS, physical device, etc.) or this guide has become dated, here are the fundamentals of what you’re aiming to achieve:

- Set up a decrypting proxy server (e.g. Fiddler, Charles, Burp, SSLSplit – note that Wireshark isn’t suitable) and export its root certificate.

- Import the root certificate into the certificate store of the device to intercept.

- Configure the device to connect via the proxy server.

- If using an app that implements certificate pinning, “fix” the app with FRIDA or another tool.

Well that’s terrifying. I had no idea that adding a root certificate enabled man-in-the-middle like this. I had assumed that the root cert would have to be in the cert chain for the specific site to be intercepted. I’ve installed certs for employers before, but I had no idea they could intercept encrypted traffic for domains other than the work domains.

Do apps that worry about this kind of attack do additional encryption on top of using https?

Indeed, this is exactly what employer-installed root certificates are typically used for: to facilitate man-in-the-middle inspection of encrypted packets (usually in order to enforce network usage policies)! The root certificate that’s installed and marked as trusted is one with “Certificate Authority” purposes: i.e. it’s trusted for the purpose of signing other certificates, and then the man-in-the-middle tool can generate certificates as-required to intercept anything it likes.

There are a number of countermeasures to this, yes. Probably the most-effective countermeasure would be for the app to require that the certificate (or, more-likely, the upchain root signing certificate) has a particular fingerprint: the fingerprint is a hash of the certificate (including its public key), so a proxy can’t present a matching certificate without also holding the corresponding private key. If the app requires that the certificate itself has a particular fingerprint then this is the most-brittle approach, because any certificate change or addition must be accompanied by an update to the app, but contemporary app store mechanics mean that this isn’t infeasible. Demanding that the root certificate is one of a pre-validated set is exactly what most browsers these days do for Extended Validation (EV) certificates, by the way: so when a web browser (other than IE) shows you a “green address bar”, then you can be sure that the certificate was signed by one of the standard “trusted” root certificates included by that browser vendor: your employer can’t fake this unless they supply their own custom build of your browser (or have the support of a CA). However, that doesn’t help much if the user doesn’t know to look for an EV certificate on a particular site in the first place, because there’s no reason an intercepting proxy couldn’t simply substitute a non-EV one when it intercepts.

Yes, an application could introduce another level of encryption on top, but that’d mostly be an example of “security through obscurity”, because an intercepting proxy targeting that specific application could (probably) still break in. If it’s using asymmetric encryption of any kind then the same principle applies: if the fingerprint of the certificate (or the signing certificate, more-likely) isn’t verified, it can be spoofed by a proxy. Symmetric encryption doesn’t have this problem, but introduces a different one in its place: the cryptographic keys must be stored within the app itself and so can be extracted by reverse-engineering. This might provide a barrier to casual interception, but won’t prevent a determined attacker.

The correct approach, then, is fingerprint comparison – the same as browsers do (for EV certificates, at least). I’ve tried a dozen or so apps on my phone, though, and virtually none of them do this, so you should consider that your mobile apps are as vulnerable as your web browsing is if you install a third-party CA certificate onto your device! If you’ve been asked by your employer to add a certificate to your trusted store it’s worth checking whether it’s one that’s permitted only to verify the identity of a particular domain or set of domains (which is probably okay) or whether it’s one that’s capable of signing other, arbitrary certificates (which is pretty alarming!).

(There are, of course, user-driven countermeasures too, like use of a “known good” VPN or SSH tunnel, but your question was about what app developers can do.)

Nice response!

Great article.

I managed to intercept browsing traffic (inc. https) but I’m not able to do it with apps. I don’t think it’s the pinning, since I don’t see any traffic at all coming from several apps. Do I need to root the emulator? Is that even possible?

Hi! It worked great!

Only one app doesn’t load anything. Maybe it blocks communication when it sees certificates like Fiddler’s?

How can I overcome that?

Tks