Get lost

I got lost on the Web this week, but it was harder than I’d have liked.

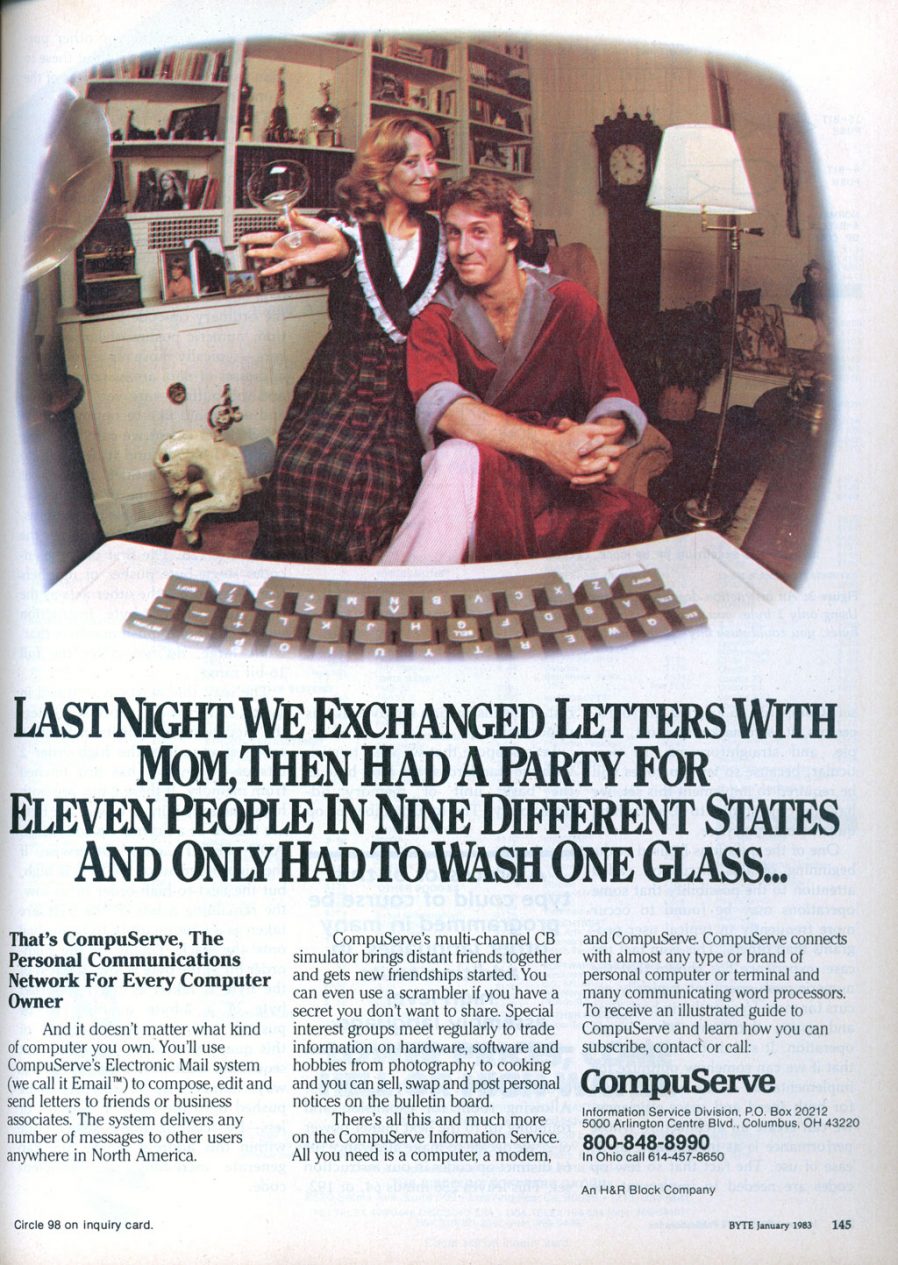

There was a discussion this week in the Abnib WhatsApp group about whether a particular illustration of a farm was full of phallic imagery (it was). This left me wondering if anybody had ever tried to identify the most-priapic buildings in the world. Of course towers often look at least a little bit like their architects were compensating for something, but some – like the Ypsilanti Water Tower in Michigan pictured above – go further than others.

I quickly found the Wikipedia article for the Most Phallic Building Contest in 2003, so that was my jumping-off point. It’s easy enough to get lost on Wikipedia alone, but sometimes you feel the need for a primary source. I was delighted to discover that the web pages for the Most Phallic Building Contest are still online 18 years after the competition ended!

Link rot is a serious problem on the Web, to such an extent that it’s pleasing when it isn’t present. The other year, for example, I revisited a post I wrote in 2004 and was pleased to find that a linked 2003 article by Nicholas ‘Aquarion’ Avenell is still alive at its original address! Contrast Jonathan Ames, the author/columnist/screenwriter who created the Most Phallic Building Contest: he kept his own site and blog going until as late as 2011 before eventually letting them lapse and fall off the Internet. It takes effort to keep Web content alive, but it’s worth more effort than it’s sometimes given.

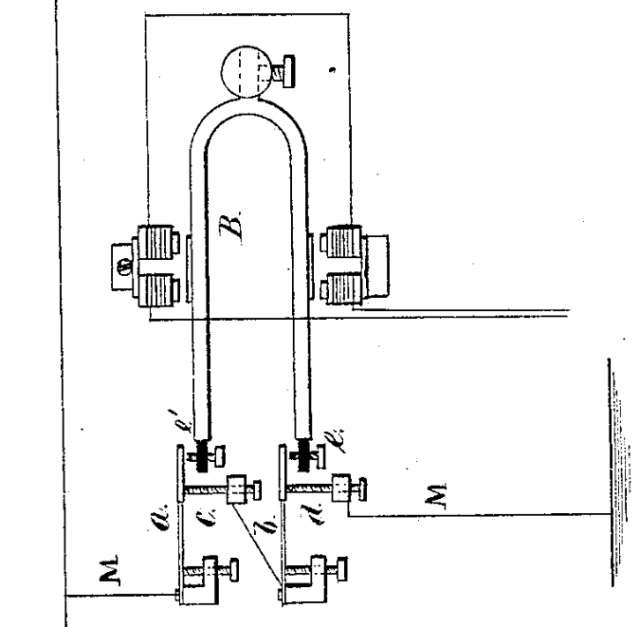

Anyway: a shot tower in Bristol – a part of the UK with a long history of leadworking – was among the latecomer entrants to the competition, and seeing this curious building reminded me about something I’d read, once, about the manufacture of lead shot. The idea (invented in Bristol by a plumber called William Watts) is that you pour molten lead through a sieve at the top of a tower, let surface tension pull it into spherical drops as it falls, and eventually catch it in a cold water bath to finish solidifying it. I’d seen an animation of the process, but I’d never seen a video of it, so I went about finding one.

British Pathé’s YouTube Channel provided me with this 1950 film, and if you follow only one hyperlink from this article, let it be this one! It’s well-shot (pun intended, but there’s a worse pun in the video!), and while I needed to translate all of the references to “hundredweights” and “Fahrenheit” to measurements that I can actually understand, it’s thoroughly informative.

But there’s a problem with that video: it’s been badly cut from whatever reel it was originally found on, and from about 1 minute and 38 seconds in it switches to what is clearly a very different film! A mother is seen shepherding her young daughter off to bed, and a voiceover says:

Bedtime has a habit of coming round regularly every night. But for all good parents responsibility doesn’t end there. It’s just the beginning of an evening vigil, ears attuned to cries and moans and things that go bump in the night. But there’s no reason why those ears shouldn’t be your neighbours’ ears, on occasion.

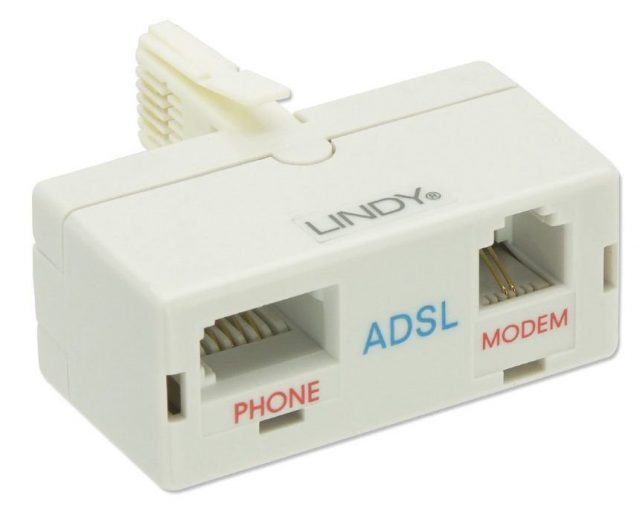

Now my interest’s piqued. What was this short film going to be about, and where could I find it? There’s no obvious link; YouTube doesn’t even make it easy to find the video uploaded “next” by a given channel. I manipulated some search filters on British Pathé’s site until I eventually hit upon the right combination of magic words and found a clip called Radio Baby Sitter. It starts off exactly where the misplaced prior clip cut out, and tells the story of “Mr. and Mrs. David Hurst, Green Lane, Coventry”, who put a microphone by their daughter’s bed and ran a wire through the wall to their neighbours’ radio’s speaker so that the neighbours could babysit without coming over for the whole evening.

It’s a baby monitor, although not strictly a radio one as the title implies (it uses a signal wire!), nor is it groundbreakingly innovative: the first baby monitor predates it by over a decade, and it actually did use radio waves! Still, it’s a fun watch, complete with its contemporary fashion, technology, and social structures. Here’s the full thing, re-merged for your convenience:

Wait, what was I trying to do when I started, again? What was I even talking about…

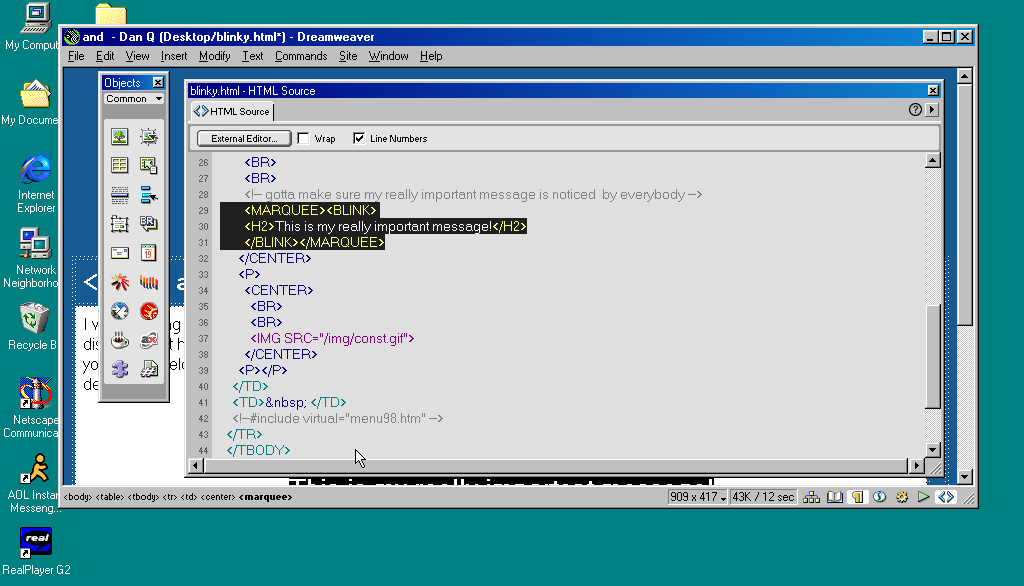

It’s harder than it used to be

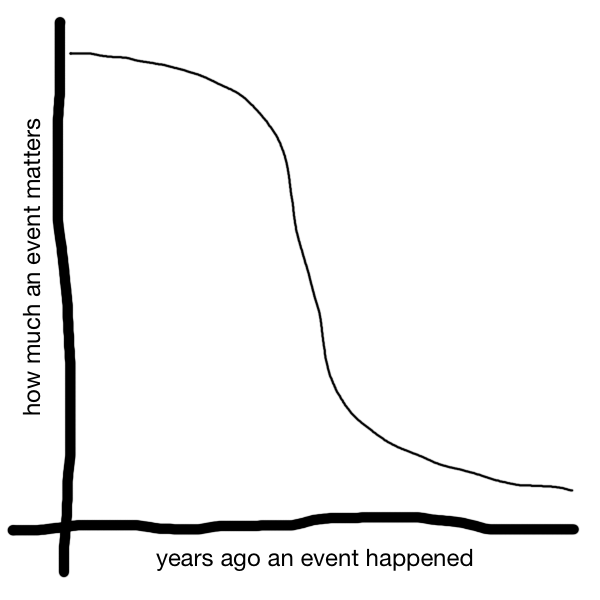

It used to be easier than this to get lost on the Web, and sometimes I miss that.

Obviously if you go back far enough this is true. Back when search engines were much weaker and Internet content was much less homogeneous and more distributed, we used to engage in this kind of meandering walk all the time: we called it “surfing” the Web. Second-generation Web browsers often even had names evocative of this kind of experience: Mosaic, WebExplorer, Navigator, Internet Explorer, IBrowse. As people started to engage in the noble pursuit of creating content for the Web they cross-linked their sources, their friends, their affiliations (remember webrings? here’s a reminder; they’re not quite as dead as you think!), their favourite sites, etc. You’d follow links to other pages, then follow their links to others still, and so on in that fashion. If you went round the circles enough times you’d start seeing all those invariably-blue hyperlinks turn purple and know you’d found your way home.

But even after that era, as search engines started to become a reliable and powerful way to navigate the wealth of content on the growing Web, links still dominated our exploration. Following a link from a resource that was linked to by somebody you know carried the weight of a “web of trust”, and you’d quickly come to learn whose links were consistently valuable and on what subjects. They also provided a sense of community and interconnectivity that paralleled the organic, chaotic networks of acquaintances people form out in the real world.

In recent times, that need for interpersonal connectivity has, for many, been filled by social networks (let’s ignore their failings in this regard for now). But linking to resources “outside” of the big social media silos is hard. These advertisement-funded services work hard to discourage or monetise activity that takes you off their platform, even at the expense of their users. Instagram limits the number of external links per profile; many social networks push for resharing of summaries of content or embedding content from other sources, discouraging engagement with the wider Web; Facebook and Twitter both run external links through a linkwrapper (which sometimes breaks); most large social networks make linking to the profiles of other users of the same social network much easier than to users anywhere else; and so on.

The net result is that Internet users use fewer different websites today than they did 20 years ago, and spend most of their “Web” time in app versions of websites (which often provide a better experience only because site owners strategically make it so to increase their lock-in and data harvesting potential). Truly exploring the Web now requires extra effort, like exercising an underused muscle. And if you begin and end your Web experience on just one to three services, that just feels kind of… sad, to me. Wasted potential.

It sounds like I’m being nostalgic for a less-sophisticated time on the Web (that would certainly be in character!). A time before we’d fully refined the technology that would come to connect us in an instant to the answers we wanted. But that’s not exactly what I’m pining for. Instead, what I miss is something we lost along the way, on that journey: a Web that was more fun-and-weird, more interpersonal, more diverse. More Geocities, less Facebook; there’s a surprising thing to find myself saying.

Somewhere along the way, we ended up with the Web we asked for, but it wasn’t the Web we wanted.

![[Animated GIF] Puppy flumps onto a human.](https://bcdn.danq.me/_q23u/2019/09/cute-puppy.gif)