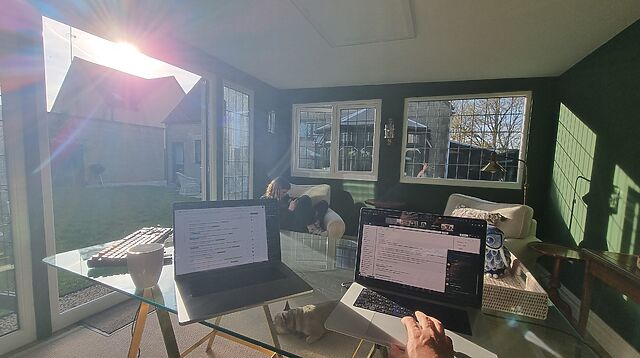

The dog is concerned. Why, despite all her warnings, am I still letting these men take all of our (surviving) furniture?


F-Day plus 55

It’s fifty-five days since my house flooded. Since then, I’ve lived in hotels, with friends, on volunteering retreats and – mostly – in a series of one- or two-week AirBnB-style short-term lets. It’s been wild. It’s also been wildly disruptive. To our work. To our kids. To our general stability.

Today, we make a change. Today we’re moving into a medium-term let: somewhere we can stay for the… say… six months or so it’ll take to actually repair our house so we can move back in. We’ll have our own space again in a way we haven’t in a couple of months.

I know the hard work isn’t done. Our house is still a wreck! But it feels like, perhaps, we’re beginning the second act of the three-act play “The Year Of The Flood”. And that feels like progress.

Right, I’d better go move house! (for like the seventh time this year…)

Indian Food

On our last day out at our current AirBnB, we searched for a takeaway.

Google Maps found me a Chinese takeaway, but it had an unexpected suggestion when I asked for an Indian:

Surprise Pig

It’s my final day in the cute garden office of the AirBnB we’re living in this week, and every time I step through the door I catch a glimpse of our small, sandy-coloured dog squatting in the garden.

Except the dog isn’t even here. My brain keeps getting tricked… by this statue of a pig:

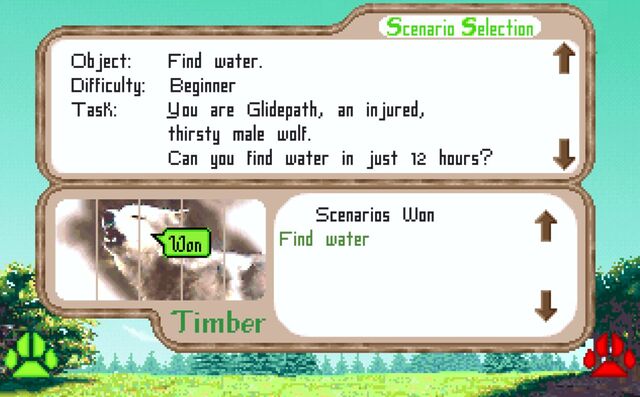

Garden Office

I’ve lived in a LOT of different places these last few months while we’ve been arranging a place to live for the next six months or so of our house repairs. Each new AirBnB has had its pros and cons (and none has felt like “home”).

But man, I really like the “garden office” at our current one. So nice to work in the sun!

(I don’t like the slow WiFi as much, but yeah… pros and cons!)

Tesco Assistnace

Letting Games Die

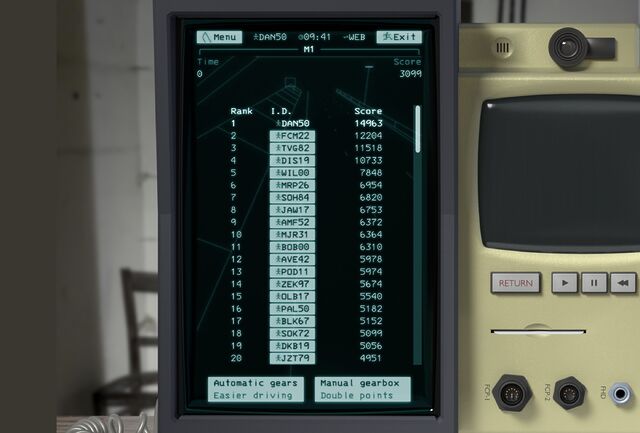

Letting code (and games) die

Mike Cook wrote a provocative blog post this weekend: an anti-preservationist argument for video games. The essence of his argument seems to boil down to:

- Emphasising creation over preservation is liberating, as demonstrated by the imagination in the livecoding community.

- Archiving without intensive curation is building an emotional or intellectual safety net you never expect to be used.

- Digital preservation is a lossy process: effort spent on accurately preserving some media comes at the expense of other media, whose lossy preservation paints an inaccurate picture of what is lost.

- Recreation, rather than strict preservation, ensures the continuity of the most culturally-important parts of games.

He concludes by saying:

60 games are released on Steam every day.

There are 294 game jams active on Steam as I write this.

Preserve nothing. Make more.

To make is to preserve.

Let games die.

Digital preservationism

Philosophically-speaking, there’s no doubt that I am a digital preservationist. I argue against unnecessary URI changes. I donate to The Internet Archive. Back at the Bodleian, I used to carve out time from project work to make sure the University’s “older” exhibition websites would survive1. My approach to running out of hard drive space is to buy more hard drives. Even my blog retains content going back into the last millennium2!

But I like this kind of conversation. For World Digital Preservation Day a few years back I re-implemented Pong as a modern application but using retro controllers. Within its micro-exhibition, I used this as an excuse to get people to discuss what it means to preserve a videogame.

Similarly, back in 2021 I reverse-engineered and re-implemented the “lost” piece of advertainment Axe Feather, mostly because I felt that a slightly-modernised version belonged in the “commons”.

This makes it seem like I’m very much on the side of recreation, rather than preservation, but that’s not the case. In both of these projects I started by disassembling the original works.

That I chose to make them accessible to a modern audience by reimplementation rather than by emulation was an artistic choice. I opted for lower fidelity by making something mildly-transformative. I chose to appeal to the widest possible audience, at the expense of presenting an experience that was totally in keeping with the original.

But I couldn’t have done that without access to the originals. Had I recreated Pong from memory rather than from re-playing it, I’d have doubtless introduced inconsistencies that would have “felt wrong” to people whose memories of the game, while fundamentally accurate, differed from mine. Had I recreated Axe Feather without first coming up with a mechanism to extract and reformat the video clips in the original I’d have failed to tap into the specific nostalgia of some of its users, which was tied to the specific actor who performed in it3.

So I guess it’s important to me that somebody is preserving these things. So that I can use them to create new things. I stand for preservation for culture’s sake, so that I personally can enjoy the benefits for nostalgia’s sake.

But I get what Mike’s saying

For all that I feel like I’m making the case for “preserve everything; work out what’s important later”, Mike’s argument gives me an uncomfortable cognitive dissonance. Because I’ve also come to discover a joy in the ephemeral.

Increasingly, I’m okay with just taking the experience of something with me. It bothers me that my memory is fallible and that I can’t necessarily recreate a digital experience whose technology has been lost to time, but I am, for the most part, okay with it.

Some of the best gaming experiences I’ve ever had are impossible to “capture” in an archive anyway. They were conversations over the tabletop roleplaying table, or moments of tension resulting from a videogame’s emergent gameplay, or random occurrences unlikely to be replicated. Those get preserved in my memory alone, retold as stories with gradually-decreasing accuracy as new memories take their place.

That said…

Who decides what games get preserved?

I feel like the decision about what to preserve and how should be in the hands of the audience of a piece of art, not its creators. If a videogame (or film, or book, or whatever) is culturally-significant enough to warrant a high-fidelity preservation, it ought to be ultimately up to the members of that culture to make that decision!

Transport Tycoon Deluxe met that bar, and it’s possible to play both faithful recreations and modern reimplementations (the latter having excellent new features) courtesy of the OpenTTD project4.

But modern videogames are, perhaps, getting harder to preserve. Always-online features, insidious DRM, digital distribution, live updates, and games-as-a-service streaming all shift the balance of power more-firmly into the hands of publishers5 rather than players. It’s already hard to play a randomly-selected thirty-year-old videogame today; I reckon it’ll be almost impossible to do the same thirty years hence.

Saying “let games die” feels a bit like giving up to that inevitability. Like saying to the slimier publishers “it’s okay, we didn’t care about keeping that anyway” when they shut down servers or remotely kill games. I know that’s not what Mike’s saying, but it could be wilfully misinterpreted that way.

Anyway: I don’t have a nice conclusion to any of this. Just a lot of mixed-up feelings.

Footnotes

1 A policy which, since my departure, does not seem to have continued.

2 Even where those writings don’t really represent me well any more.

3 It turns out that, for a significant number of folks who are mostly younger-than-me, this advertisement represented a kind of sexual awakening, based on some of the comments and emails I’ve received about it!

4 Which I’ve also donated to. Turns out I’m happy to invest in both pure preservation and in spiritual-successor reimplementation!

5 Supposing that Sonic Rumble Party somehow wasn’t a catastrophic pay-to-win nightmare and somehow was deemed culturally-significant… how would you go about archiving it? Without Sega/Sonic Team’s consent, you’d be totally out of luck.

Easter Eggy Breakfast

Today I had a Cadbury Creme Egg… for breakfast.

I am a monster.

Dan Q found GC1FNVT SideTracked – Kingham

This checkin to GC1FNVT SideTracked - Kingham reflects a geocaching.com log entry. See more of Dan's cache logs.

Found successfully on an Easter morning dog walk from Bledington. The geopup and I came out across the fields, trying to get outdoors early before the rain that’s forecast for later in the day (though personally I’m sceptical that today’s weather will be anything short of glorious). The walk was delightful, although we unfortunately arrived right before a train was due and so there were many muggles waiting nearby to collect their arriving loved ones.

Fortunately the nature of the GZ provides a perfect excuse to be sitting around, and so I soon began my search. An initial lying-on-the-floor visual survey didn’t help, nor did a fingertip search through what I figured were the obvious spots. This, coupled with the lack of any logs yet this year and the fact that the last log was a DNF, might have given me cause to worry. But a deeper dive through the past logs indicated that perhaps this cache is just… hard!… and so I continued on.

The next trick in my caching toolbox was my phone. Switched to selfie mode and recording a video, I swept it slowly and methodically through all of the hiding spots I could think of. Watching the video back (while offering my patient pup scritches with my spare hand), I caught a glimpse of something: a flash of metal of an unexpected colour! Now I knew it was there! I renewed my search with fingertips focused on the spot I’d identified: an unusual area for this kind of cache, perhaps, but easy to find once you’re sure where to look!

Soon the cache was, at last, in hand. Careful patience, logical elimination, and a determination not to give up were the keys, here. TFTC!

F-Day plus 50

Dan Q found GC1ZEKG Church Micro 2809 – Bledington

This checkin to GC1ZEKG Church Micro 2809 - Bledington reflects a geocaching.com log entry. See more of Dan's cache logs.

QEF for the geohound and me as we came out for a walk from the house we’re borrowing this week – the latest of many AirBnB-like week-long lets we’ve had to decamp to after our house was rendered uninhabitable by a flash flood around fifty days ago. Hopefully the last, though, as the insurance company may at last have found us somewhere to live longer-term while our house is repaired!

Cache container seemed slightly exposed by damage to a nearby fence, so I tucked it back in a little deeper than I found it.

TFTC and for showing us this delightful footpath which is sure to become a favourite walking route for the doggo and me during our week here.

Dan Q did not find GC4M7C4 Scouting About

This checkin to GC4M7C4 Scouting About reflects a geocaching.com log entry. See more of Dan's cache logs.

The elder geokid and I had a search in all the likely spots we could find after attending her cousin’s 2nd birthday party nearby. No luck for us today!

Dan Q did not find GCARTHJ Knock Knock, Who’s There!

This checkin to GCARTHJ Knock Knock, Who's There! reflects a geocaching.com log entry. See more of Dan's cache logs.

Ran out of time and had to give up. Nice view of our accommodation for this week from this hill, though!

Predictions in a Hat

Two decades ago this month my friend Matt posted five predictions about the future of the world. I’ve revisited these predictions twice since: ten years later and twenty years later, and “scored” his predictions both times.

I love that the Web’s memory (and the persistence of URLs) makes this kind of long-term conversation possible.

Dan Q found GCARTHE Rope Swing

This checkin to GCARTHE Rope Swing reflects a geocaching.com log entry. See more of Dan's cache logs.

Found without difficulty while the geokid amused himself on the swing. Shame about the litter near the GZ; it may be a CITO opportunity up here! Loving the views: I think I can see our accommodation from here! TFTC and for showing us this rope swing!

![Photo of a self-checkout screen reading "Sorry, we don't recognise this Clubcard. Try again or call for assistnace." [sic]](https://bcdn.danq.me/_q23u/2026/04/1000134048-640x384.jpg)