Most of the traffic I get on this site is bots – it isn’t even close. And, for whatever reason, almost all of the bots are using HTTP1.1 while virtually all human traffic is using later protocols.

I have decided to block v1.1 traffic on an experimental basis. This is a heavy-handed measure and I will probably modify my approach as I see the results.

…

```caddyfile
# Return an error for clients using http1.1 or below - these are assumed to be bots
@http-too-old {
	not protocol http/2+
	not path /rss.xml /atom.xml # allow feeds
}

respond @http-too-old 400 {
	body "Due to stupid bots I have disabled http1.1. Use more modern software to access this site"
	close
}
```

This is quick, dirty, and will certainly need tweaking but I think it is a good enough start to see what effects it will have on my traffic.

…

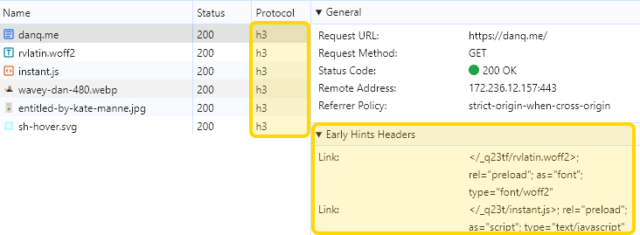

A really interesting experiment by Andrew Stephens! And I love that he shared the relevant parts of his Caddyfile: nice to see how elegantly this can be achieved.
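For anyone who thinks in code rather than Caddyfiles, here's a minimal sketch of roughly the same rule written as Go `net/http` middleware. It's my own approximation, not how Caddy actually implements its `protocol` and `path` matchers; `blockOldHTTP`, `feedPaths` and `status` are hypothetical names, and it assumes exact path matching (which is what Caddy's `path` matcher does when no wildcard is given).

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// feedPaths mirrors the `not path /rss.xml /atom.xml` exception (exact matches).
var feedPaths = map[string]bool{"/rss.xml": true, "/atom.xml": true}

// blockOldHTTP answers 400 to anything older than HTTP/2, except feed
// requests, roughly like the @http-too-old matcher plus respond directive.
func blockOldHTTP(next http.Handler) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if !r.ProtoAtLeast(2, 0) && !feedPaths[r.URL.Path] {
			// Ask Go's HTTP/1.x server to close the connection after this
			// response; similar in spirit to Caddy's `close` subdirective.
			w.Header().Set("Connection", "close")
			w.WriteHeader(http.StatusBadRequest)
			fmt.Fprint(w, "Due to stupid bots I have disabled http1.1. Use more modern software to access this site")
			return
		}
		next.ServeHTTP(w, r)
	})
}

// status pushes a synthetic request with the given protocol version through
// the middleware and returns the resulting status code.
func status(proto string, major, minor int, path string) int {
	site := blockOldHTTP(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprint(w, "hello")
	}))
	req := httptest.NewRequest(http.MethodGet, path, nil)
	req.Proto, req.ProtoMajor, req.ProtoMinor = proto, major, minor
	rec := httptest.NewRecorder()
	site.ServeHTTP(rec, req)
	return rec.Code
}

func main() {
	fmt.Println("HTTP/1.0 /        ->", status("HTTP/1.0", 1, 0, "/"))
	fmt.Println("HTTP/1.1 /        ->", status("HTTP/1.1", 1, 1, "/"))
	fmt.Println("HTTP/1.1 /rss.xml ->", status("HTTP/1.1", 1, 1, "/rss.xml"))
	fmt.Println("HTTP/2.0 /        ->", status("HTTP/2.0", 2, 0, "/"))
}
```

Run it and the two old-protocol requests for `/` should get `400`, while the feed request and the HTTP/2 request get `200`. Note that `ProtoAtLeast(2, 0)` also lets HTTP/3 through, just like `http/2+`.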

I decided to probe his server with cURL:

```console
~ curl --http0.9 -sI https://sheep.horse/ | head -n1
HTTP/2 200
~ curl --http1.0 -sI https://sheep.horse/ | head -n1
HTTP/1.0 400 Bad Request
~ curl --http1.1 -sI https://sheep.horse/ | head -n1
HTTP/1.1 400 Bad Request
~ curl --http2 -sI https://sheep.horse/ | head -n1
HTTP/2 200
```

Curiously, while his configuration blocks both HTTP/1.1 and HTTP/1.0, it doesn’t seem to block HTTP/0.9! Whaaa?

It took me a while to work out why this was. It turns out that cURL won't make HTTP/0.9 requests over https:// connections: `--http0.9` only tells it to accept an HTTP/0.9-style response, so it went ahead and negotiated HTTP/2 as usual. Interesting! Though it presumably wouldn't have worked anyway – HTTP/1.1 requires (and HTTP/1.0 permits) the `Host:` header, but HTTP/0.9 doesn't support headers at all IIRC, and sheep.horse definitely does require the `Host:` header (I tested!).

I also tested that my RSS reader FreshRSS was still able to fetch his content. I have it configured to pull not only the RSS feed, which is specifically allowed to bypass his restriction, but also – because his feed contains only summary content – the linked page itself, in order to get the full content. The content fetcher still works, so it looks like FreshRSS is using HTTP/2 or higher.

Andrew’s approach definitely excludes Lynx, which is a bit annoying and would make this idea a non-starter for any of my own websites. But it’s still an interesting experiment.